To ease new deployments, we are maintaining a Kubernetes Helm chart.

This setup is not recommended for use as-is in production for a few reasons, namely:

- Application-level updates: We tend to move quite fast, and if things get busy, blink twice, and you’re on an outdated server. This has security implications, too.

- Database backups: Again, this is up to you, mostly based on your risk appetite when it comes to dealing with live data. We’re healthily paranoid, so we’ve set up replication, failover nodes, and PITR.

- Automatic scalability: for example, the preview service can be quite a monster; that setup can eat up all of a VM’s resources and starve other processes, causing general system-wide instability. Cloud providers can provide horizontal VM auto-scaling, and Kubernetes can provide horizontal pod auto-scaling, but these are not discussed in this guide.

- Monitoring: this setup does not describe integrations for telemetry, metrics, tracing, or logging. Nor does it describe alerting or response actions.

- Firewall and network hardening: running in production requires additional security hardening measures, particularly protecting the network from intrusion or data exfiltration.

Prerequisites

- [Required] A DigitalOcean account. Please note that this guide will create resources that may incur a charge on DigitalOcean.

- [Required] Helm CLI installed.

- [Required] Kubernetes CLI client installed.

- [Required] A domain name, or a sub-domain to which a DNS A Record can be added.

- [Optional] An email service provider account of your choice (to allow the server to send emails)

- [Optional] An authentication service of your choice (to allow the server to authenticate users), otherwise username & password authentication will be used.

- [Optional] The DigitalOcean CLI client is installed.

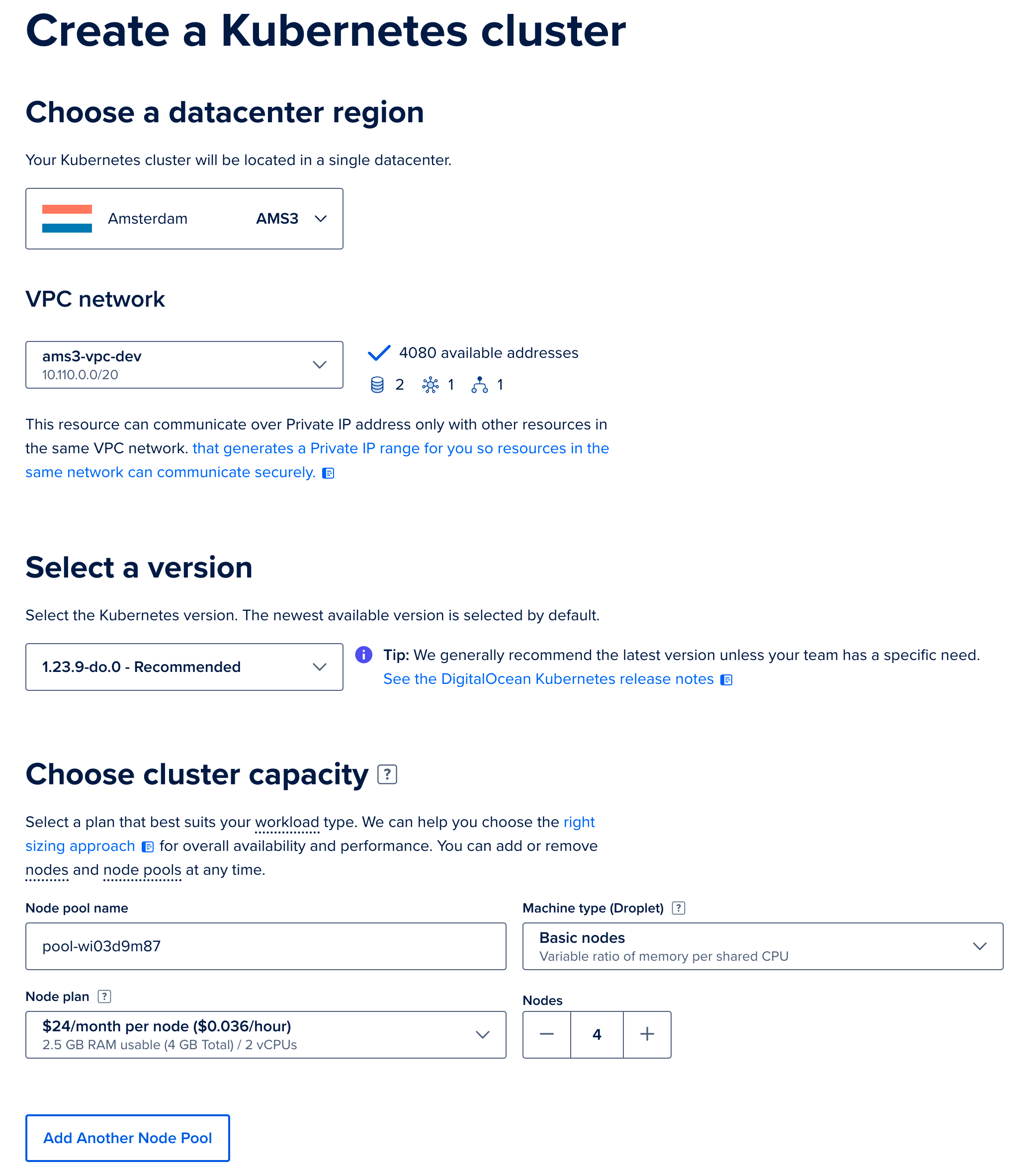

Create the Kubernetes Cluster

Go to your DigitalOcean dashboard and create a new Kubernetes cluster. We gave the cluster a name but left the configuration as per DigitalOcean’s recommended defaults. When prompted to select the node count and size, we selected four nodes. Each node has the default 2 vCPU and 4 GB (s-2vcpu-4gb). While this is a minimum, your usage may vary, and we recommend testing under your typical loads and adjusting by deploying new nodes or larger-sized machines in new node-pools.

Configure the other options for your Kubernetes cluster, then click the Create Cluster button. After the cluster is created and initialized, you should see it in your list of Kubernetes clusters:

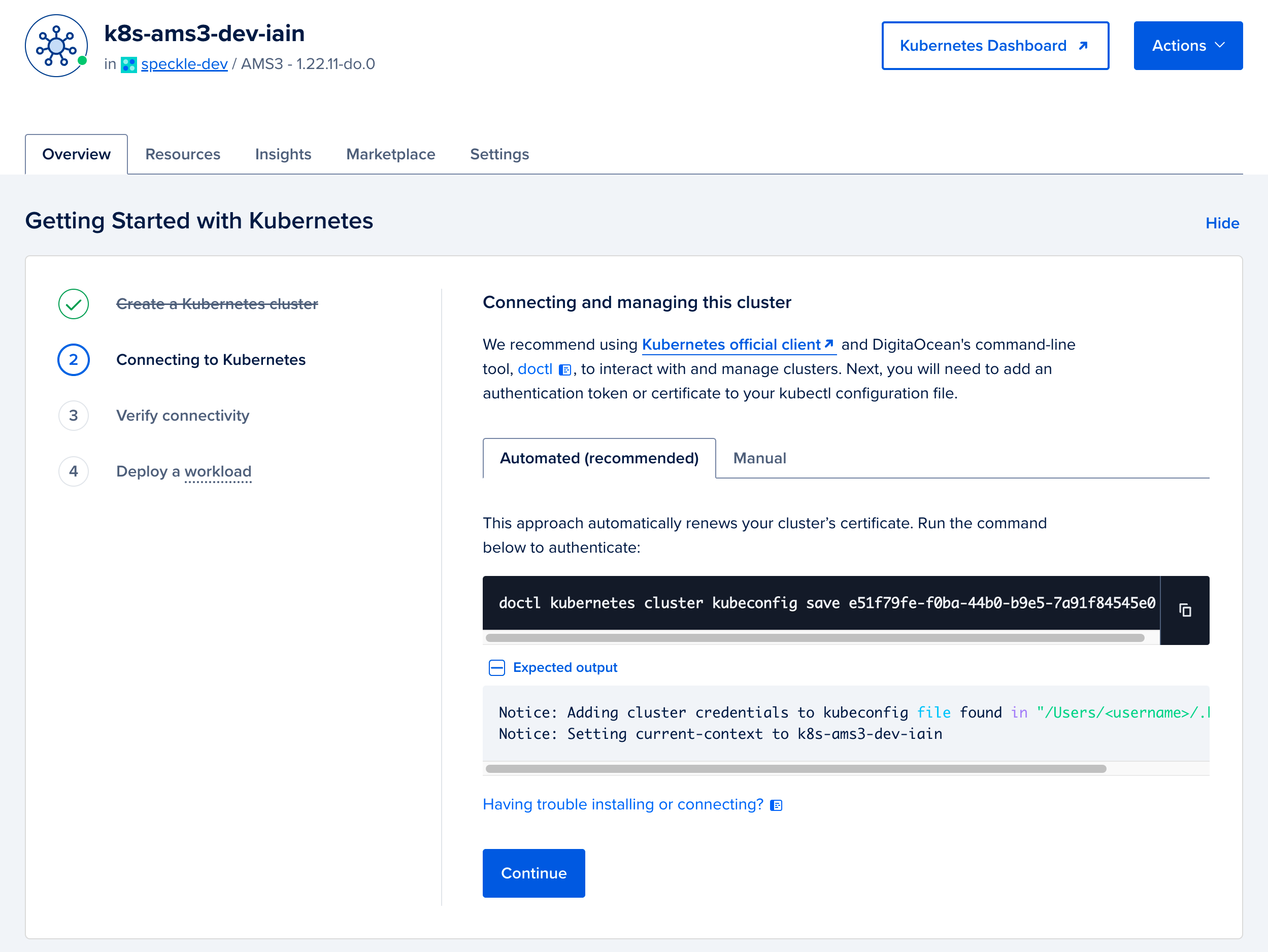

To log in to the cluster, follow the getting-started guide on the DigitalOcean dashboard for your cluster. We recommend using the automated option to update your local Kubernetes configuration (kubeconfig) with the DigitalOcean client, doctl.

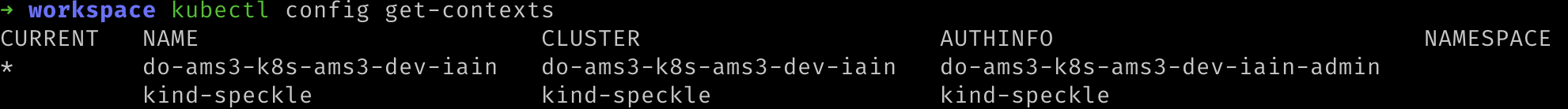

After downloading the Kubernetes config, you can verify that your Kubernetes client has the cluster configuration by running the following command. A list of Kubernetes clusters will be printed; your cluster context should have the prefix do-. Make a note of the name; you will use it in place of ${YOUR_CLUSTER_CONTEXT_NAME} in most of the following steps of this guide.

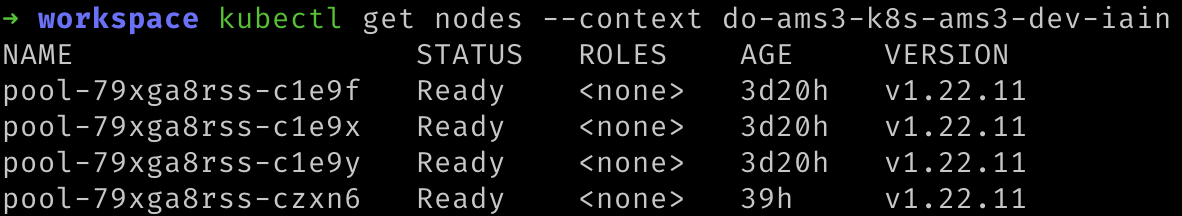

Verify that you can connect to the cluster using kubectl by running the following command, which shows the nodes you have provisioned. Remember to replace ${YOUR_CLUSTER_CONTEXT_NAME} with the name of your cluster. You should see one line for each node.
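The two checks above can be sketched roughly as follows, assuming kubectl is installed and your kubeconfig already contains the DigitalOcean cluster. The context name used here is a made-up placeholder, not a real value.

```shell
# List every context in your local kubeconfig; DigitalOcean contexts are prefixed with "do-".
kubectl config get-contexts

# Store the context name once so that later commands can reuse it.
# "do-ams3-example-cluster" is a hypothetical placeholder; substitute the name printed above.
export YOUR_CLUSTER_CONTEXT_NAME="do-ams3-example-cluster"

# Show the nodes in the cluster to confirm that kubectl can reach it.
kubectl get nodes --context "${YOUR_CLUSTER_CONTEXT_NAME}"
```

Exporting the variable is only a convenience; you can equally paste the context name into each command.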

(Optional): Configure Valkey

Speckle requires a Valkey cache to function. You can provide your own if you have an existing database. Otherwise, follow the steps below to create a new Valkey database on DigitalOcean.

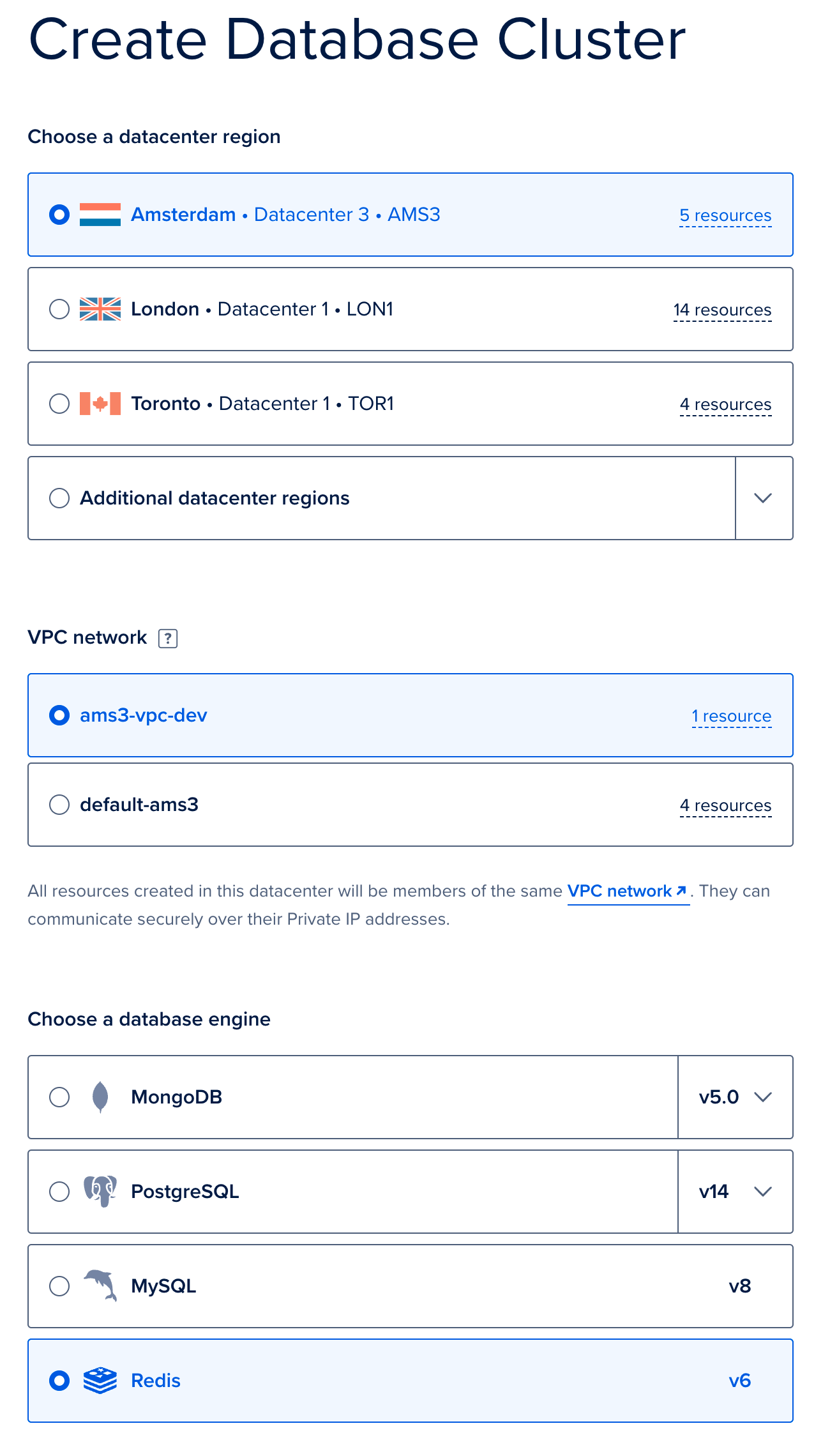

We will deploy a managed Valkey provided by DigitalOcean. Go to the new Database creation page. Firstly, select the same region and VPC as you used when deploying your Kubernetes cluster, and select Valkey. Provide a name, and click

Create Database Cluster. Again, we used the default sizes, but your usage will vary, and we recommend testing under your typical loads and adjusting as needed based on the database size.

-

From the overview, click on

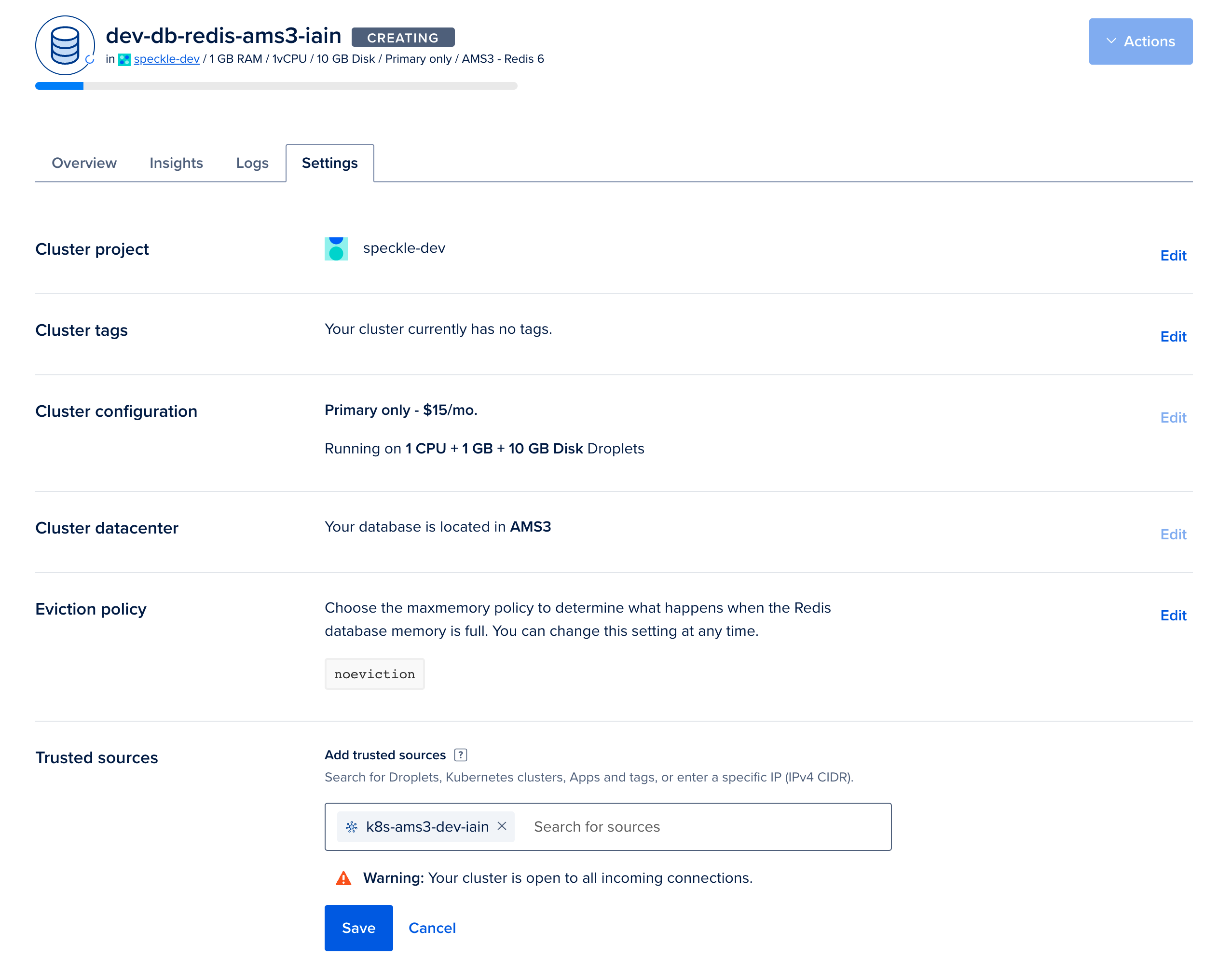

Secure this database cluster by restricting access.This will take you to the Trusted Sources panel in the Settings tab. Here, we will improve the security of your database by only allowing connections from your Kubernetes cluster. Type the name of your Kubernetes cluster and add it as a Trusted Source.

-

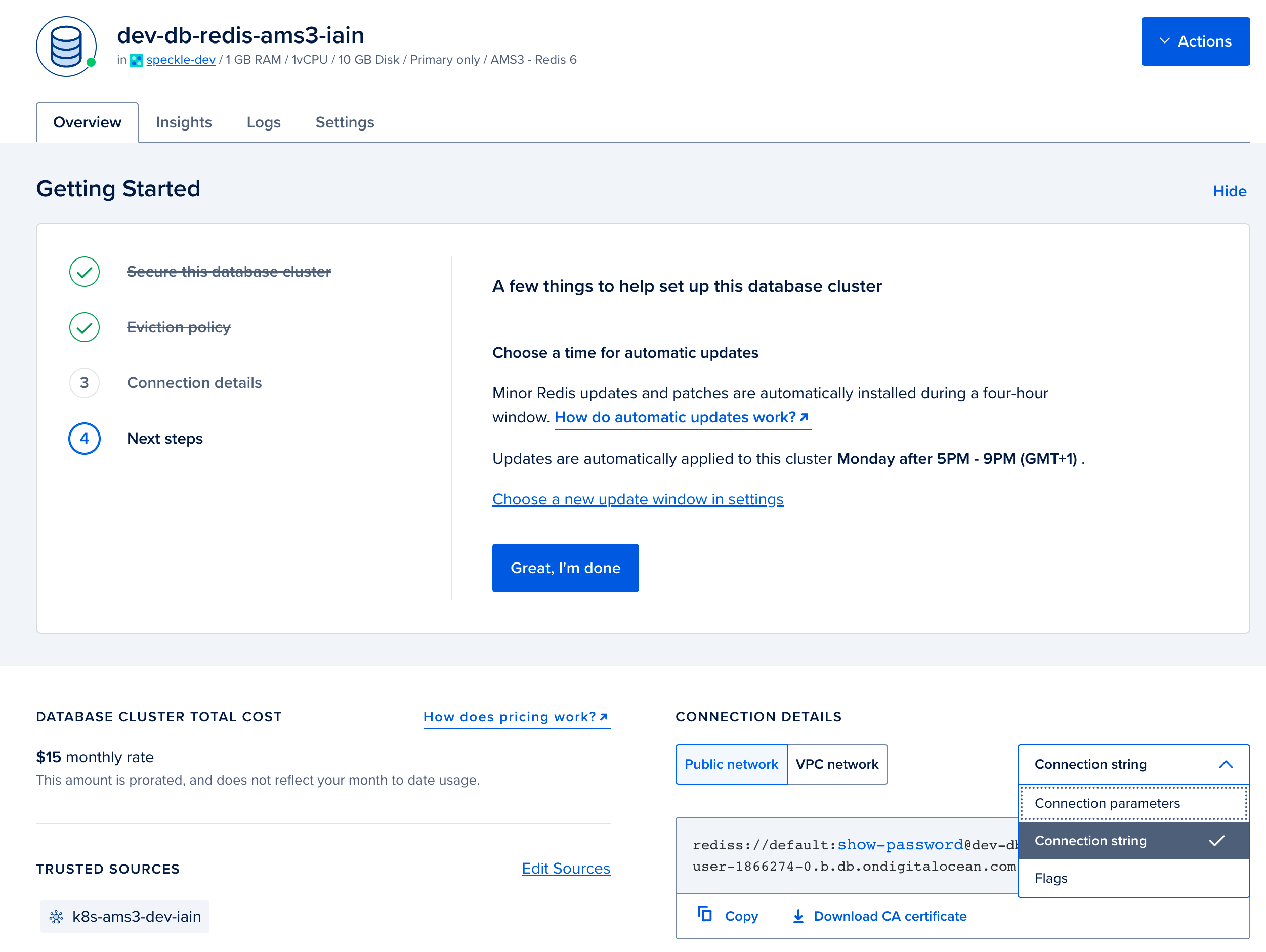

In the Overview tab for your Valkey database. Select

connection stringfrom the dropdown, and copy the displayed Connection String. You will require this when configuring your deployment in step 4.

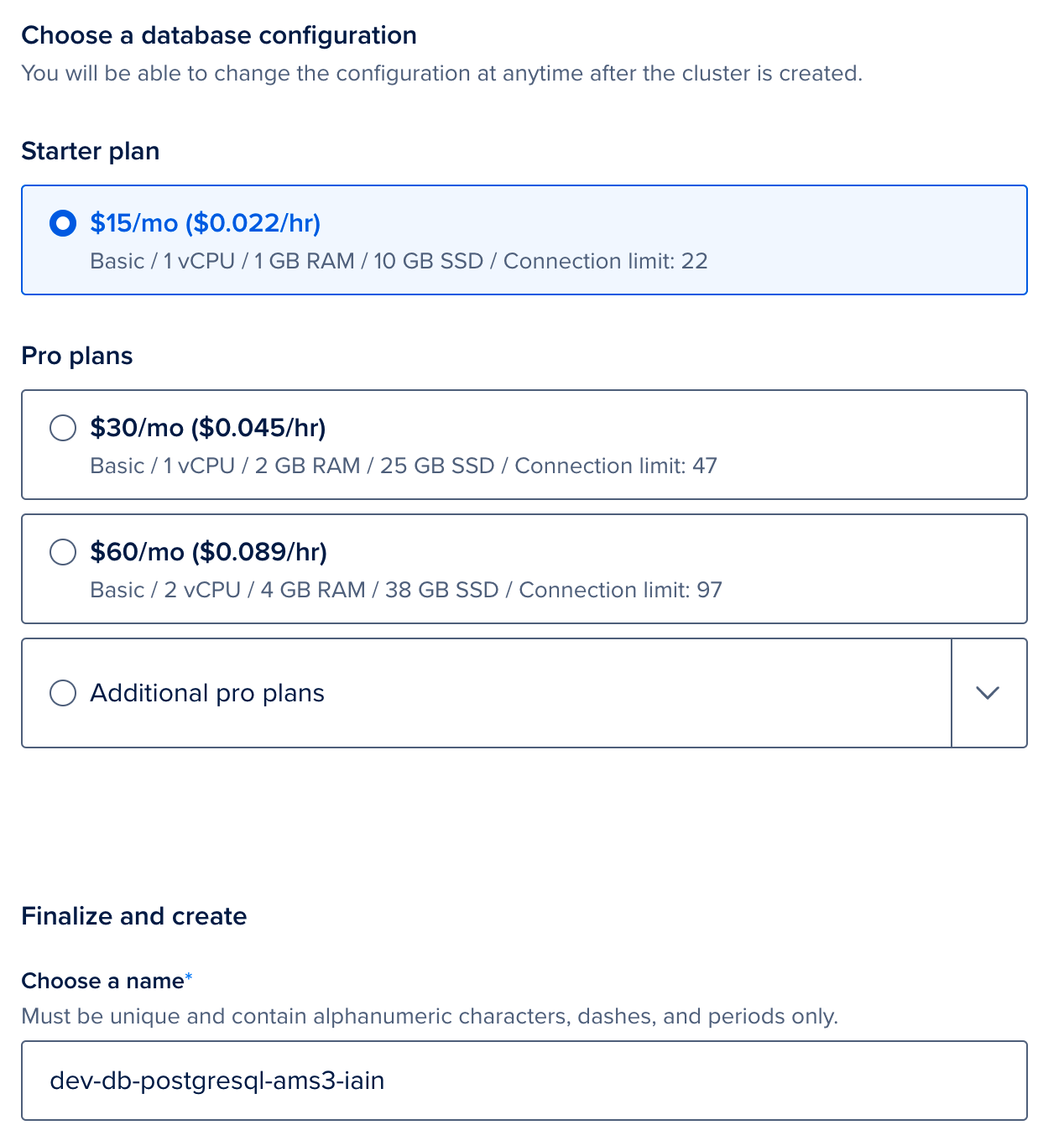

(Optional): Configure Postgres

Speckle requires a Postgres database to function. You can provide your own if you have an existing database. Otherwise, follow the steps below to create a new Postgres database on DigitalOcean.

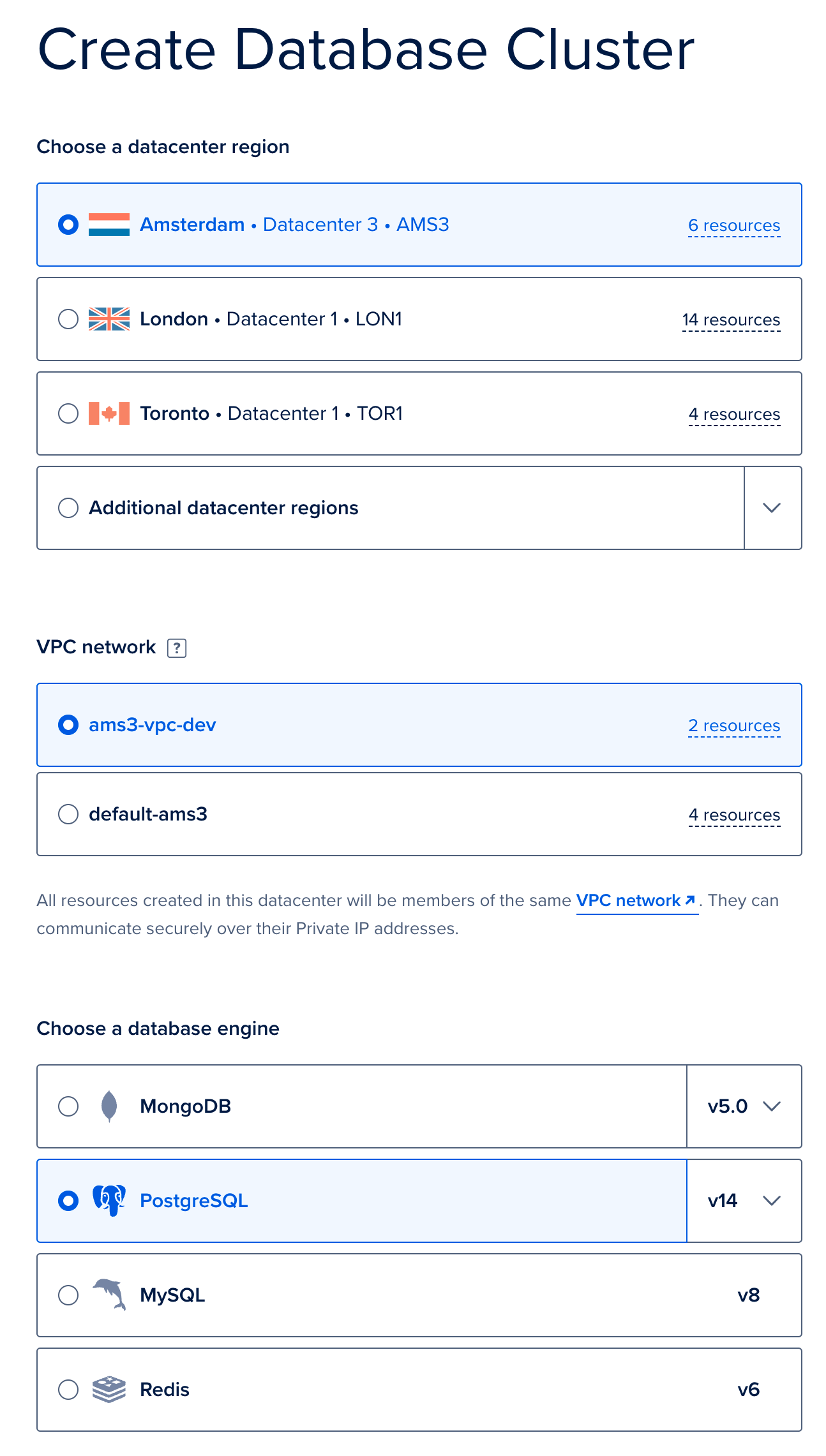

We will now deploy a managed Postgres provided by DigitalOcean. Go to the new Database creation page. Firstly, select the same region and VPC as you used when deploying your Kubernetes cluster, and then select Postgres. Provide a name, and click

Create Database Cluster. Again, we used the default sizes, but your usage will vary, and we recommend testing under your typical loads and adjusting as needed based on the database size.

-

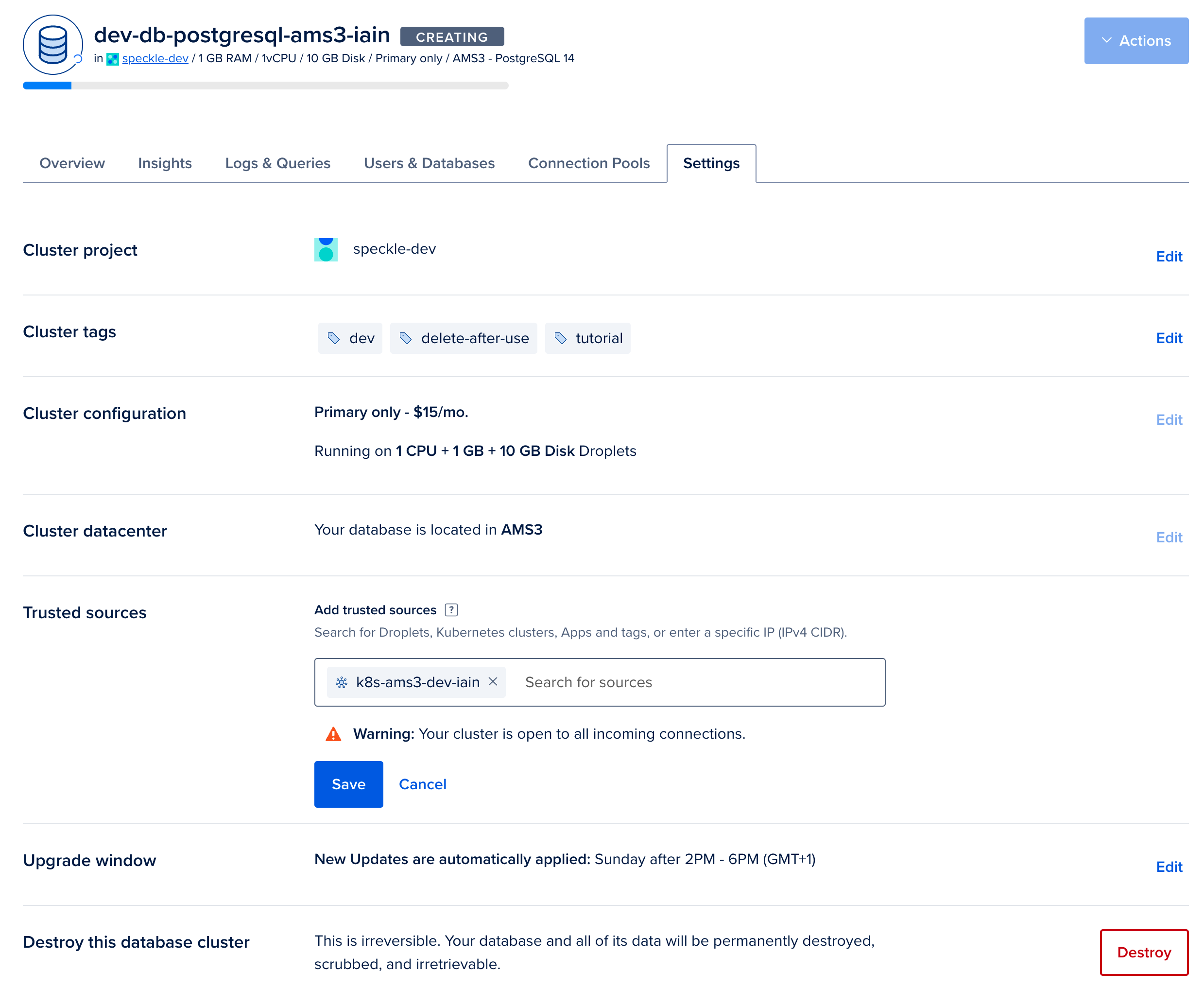

From the overview page for your Postgres database, click on

Secure this database cluster by restricting access. This will take you to the Trusted Sources panel in the Settings tab. Here, we will improve the security of your database by only allowing connections from your Kubernetes cluster. Type the name of your Kubernetes cluster and add it as a Trusted Source.

-

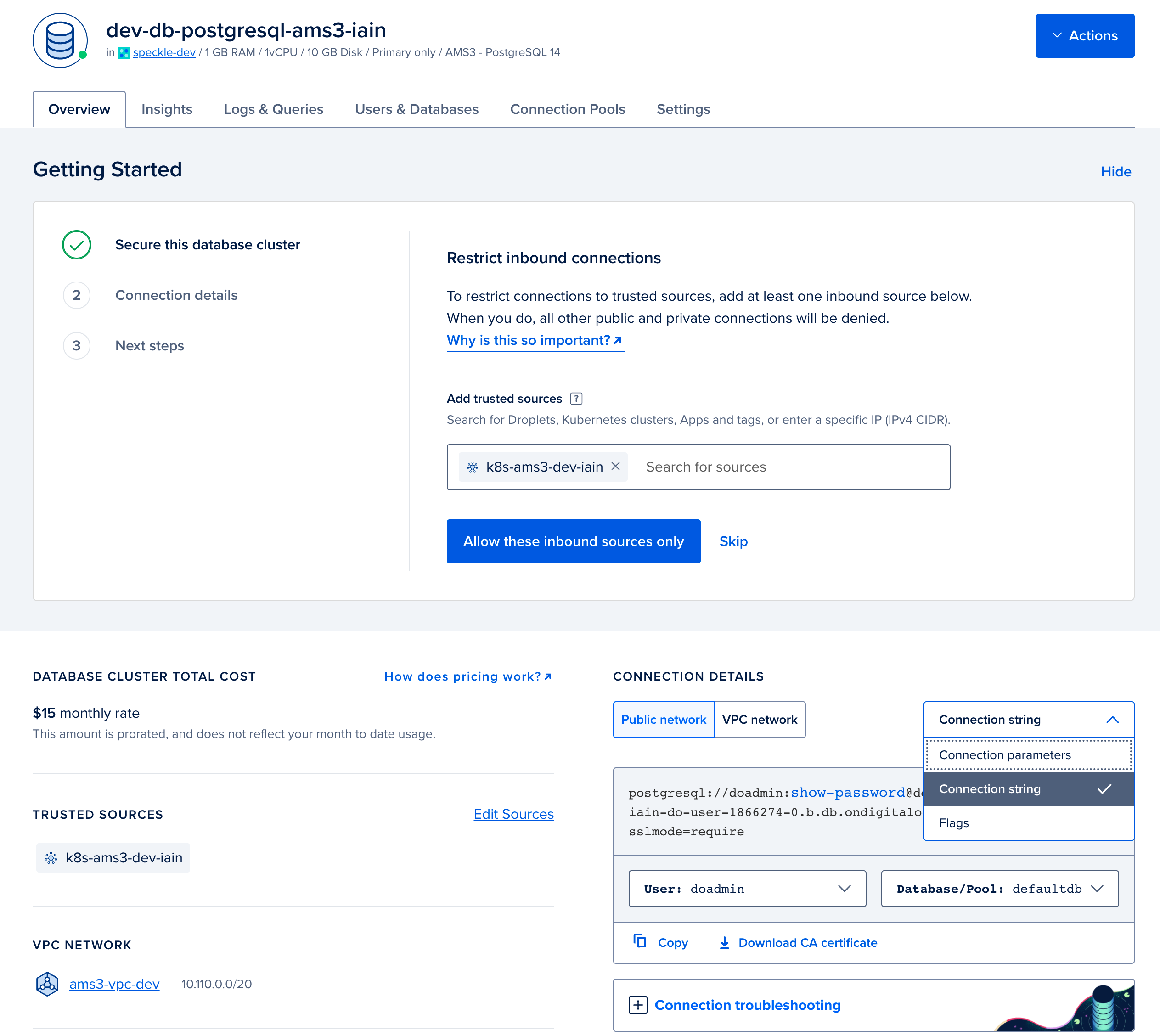

In the Overview tab for your Valkey database. Select

connection stringfrom the dropdown, and copy the displayed Connection String. You will require this for when configuring your deployment in step 4.

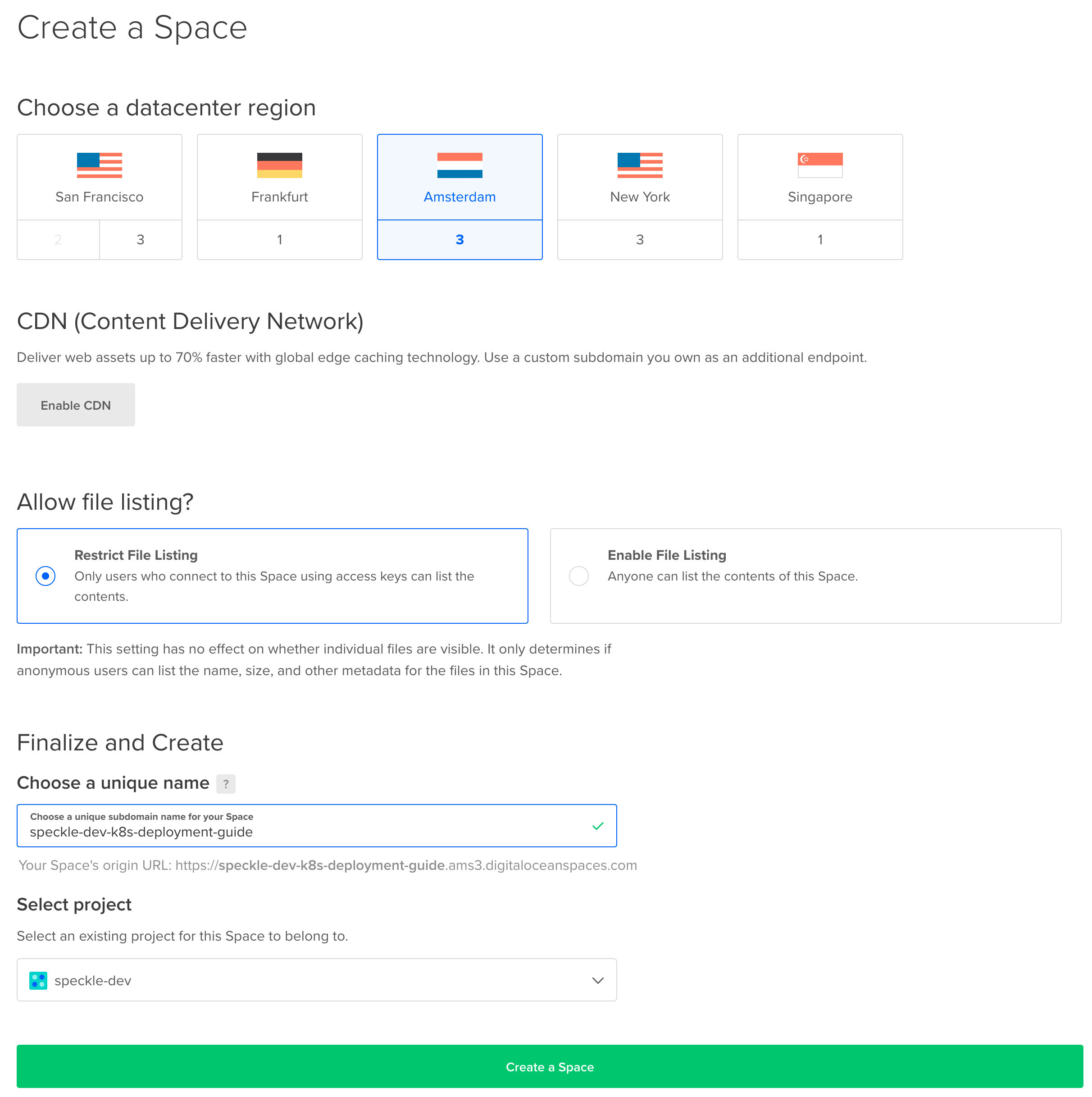

(Optional): Configure Blob Storage (DigitalOcean Spaces)

Speckle requires Blob Storage to store files and other data. You can provide your own if you have existing blob storage that is compatible with the Amazon S3 API. Otherwise, follow the steps below to create new S3-compatible blob storage on DigitalOcean.

Navigate to the Create a Space page. Select a region of your choice; we recommend the same region in which you deployed the cluster. We did not enable the CDN, and we restricted the file listing for security purposes. Provide a name for your Space. The name has to be unique in the region, so please use a different name than our example. Make a note of this name; this is the bucket value, which we will require when configuring your deployment in subsequent steps. Click on Create Space.

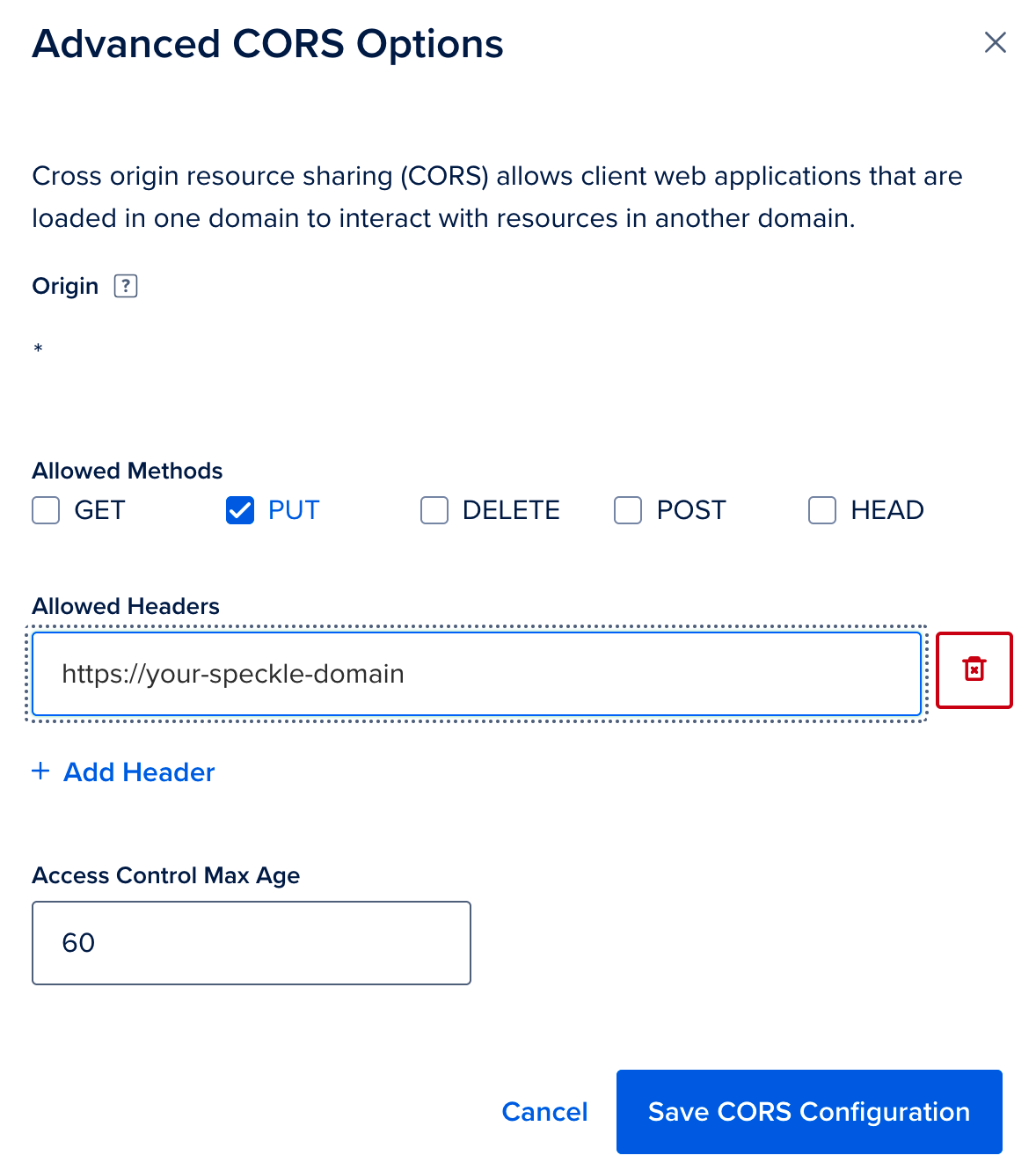

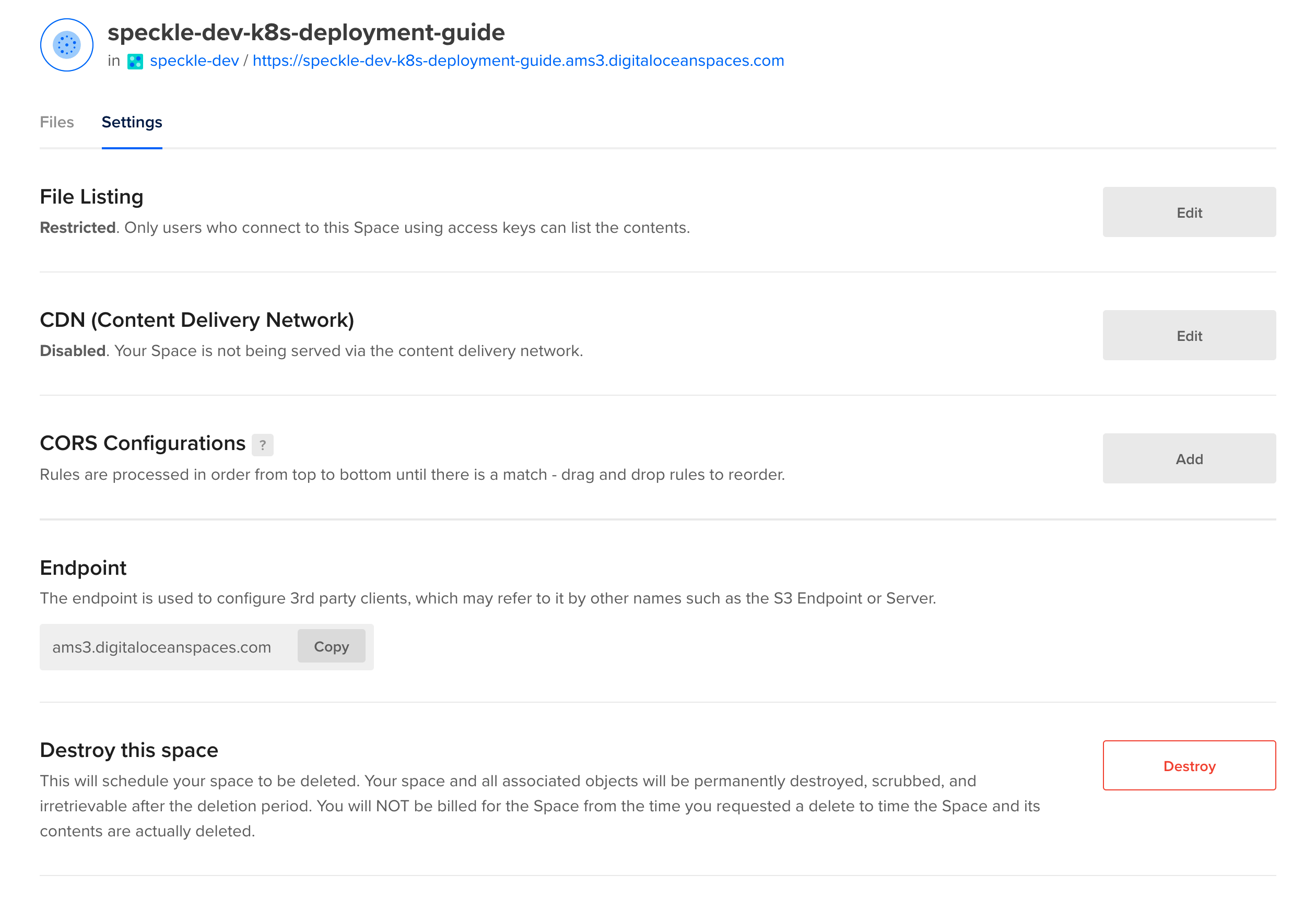

Once created, click on the Settings tab and add a CORS Configuration.

Add a CORS Configuration which allows PUT requests from your domain.

Now click on the Settings tab and copy the Endpoint value.

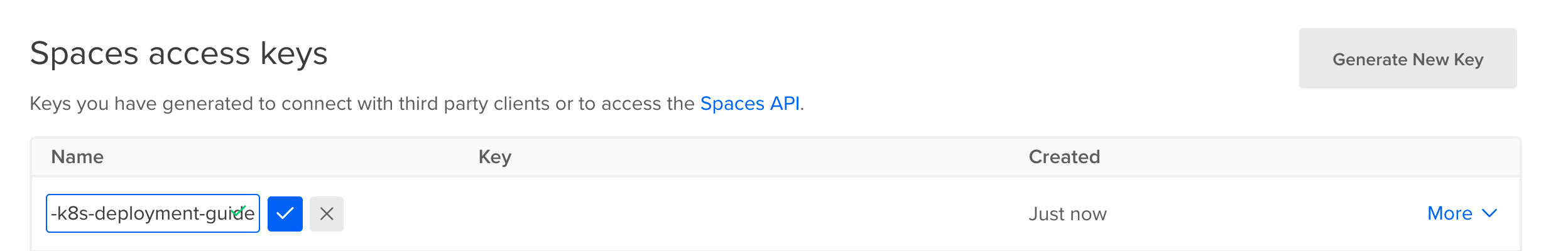

Now navigate to the API page in DigitalOcean. Next to the Spaces access keys heading, click Generate New Key. You will only be able to see the Secret value once, so copy the name, the key, and the secret, and store them securely.

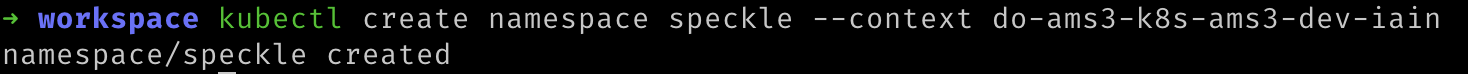

Create a Namespace

Kubernetes allows applications to be separated into different namespaces. We can create a namespace in our Kubernetes cluster with the following command. Replace ${YOUR_CLUSTER_CONTEXT_NAME} with the name of your cluster.

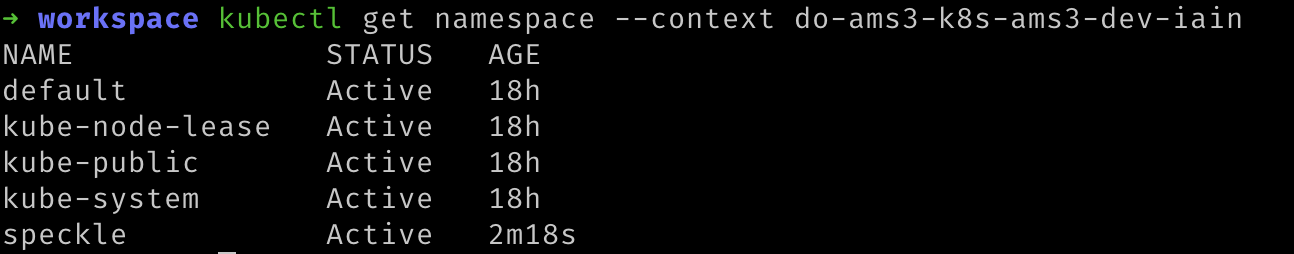

Verify that the namespace was created by running the following command. You should see a list of namespaces, including speckle. The other existing namespaces were created by Kubernetes and are required for Kubernetes to run. Replace ${YOUR_CLUSTER_CONTEXT_NAME} with the name of your cluster.
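The create-and-verify steps above might look like the sketch below. `SPECKLE_NAMESPACE` is a hypothetical shell variable introduced only for this example, and `${YOUR_CLUSTER_CONTEXT_NAME}` is assumed to be set in your shell already.

```shell
# Namespace name used throughout this guide.
SPECKLE_NAMESPACE="speckle"

# Create the namespace in the cluster.
kubectl create namespace "${SPECKLE_NAMESPACE}" --context "${YOUR_CLUSTER_CONTEXT_NAME}"

# List all namespaces; the new one should appear alongside the Kubernetes defaults.
kubectl get namespaces --context "${YOUR_CLUSTER_CONTEXT_NAME}"
```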

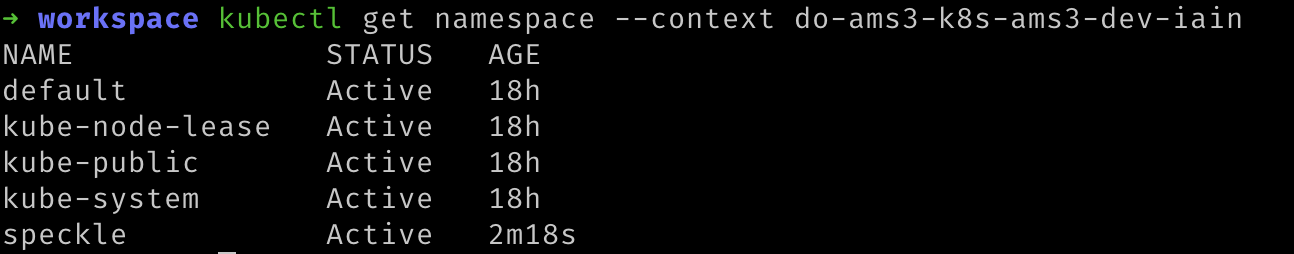

Create Secrets

To securely store the connection details for Speckle’s dependencies, we will create a secret in the Kubernetes cluster’s speckle namespace. Replace all the items starting with ${YOUR_...} with the appropriate value. ${YOUR_SECRET} should be replaced with a value unique to this cluster. We recommend creating a random value at least 10 characters long. Replace ${YOUR_CLUSTER_CONTEXT_NAME} with the name of your cluster.
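A minimal sketch of creating and inspecting the secret follows. Several names here are assumptions rather than values confirmed by this guide: the secret name `server-vars`, the keys other than `redis_url` and `email_password`, and the `${YOUR_...}` placeholder names. Check them against the Speckle Helm chart before use.

```shell
# Generate a random 32-character alphanumeric value to use as ${YOUR_SECRET}.
YOUR_SECRET="$(LC_ALL=C tr -dc 'A-Za-z0-9' < /dev/urandom | head -c 32)"

# Create the secret. The secret name and most key names are assumptions;
# only redis_url and email_password are named in this guide.
kubectl create secret generic server-vars \
  --context "${YOUR_CLUSTER_CONTEXT_NAME}" \
  --namespace speckle \
  --from-literal=redis_url="${YOUR_REDIS_CONNECTION_STRING}" \
  --from-literal=postgres_url="${YOUR_POSTGRES_CONNECTION_STRING}" \
  --from-literal=s3_secret_key="${YOUR_SPACES_SECRET}" \
  --from-literal=session_secret="${YOUR_SECRET}" \
  --from-literal=email_password="${YOUR_EMAIL_SERVER_PASSWORD}"

# Read back a single value; Kubernetes stores secret data base64-encoded.
kubectl get secret server-vars \
  --context "${YOUR_CLUSTER_CONTEXT_NAME}" \
  --namespace speckle \
  --output jsonpath='{.data.redis_url}' | base64 --decode
```

Omit the `email_password` line if you do not plan to enable email.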

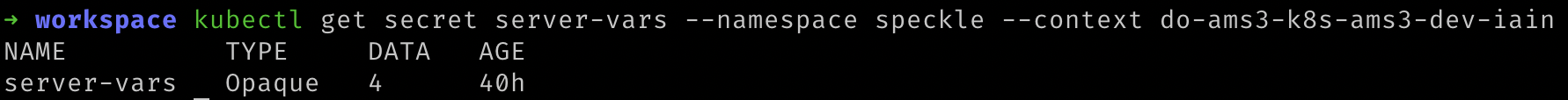

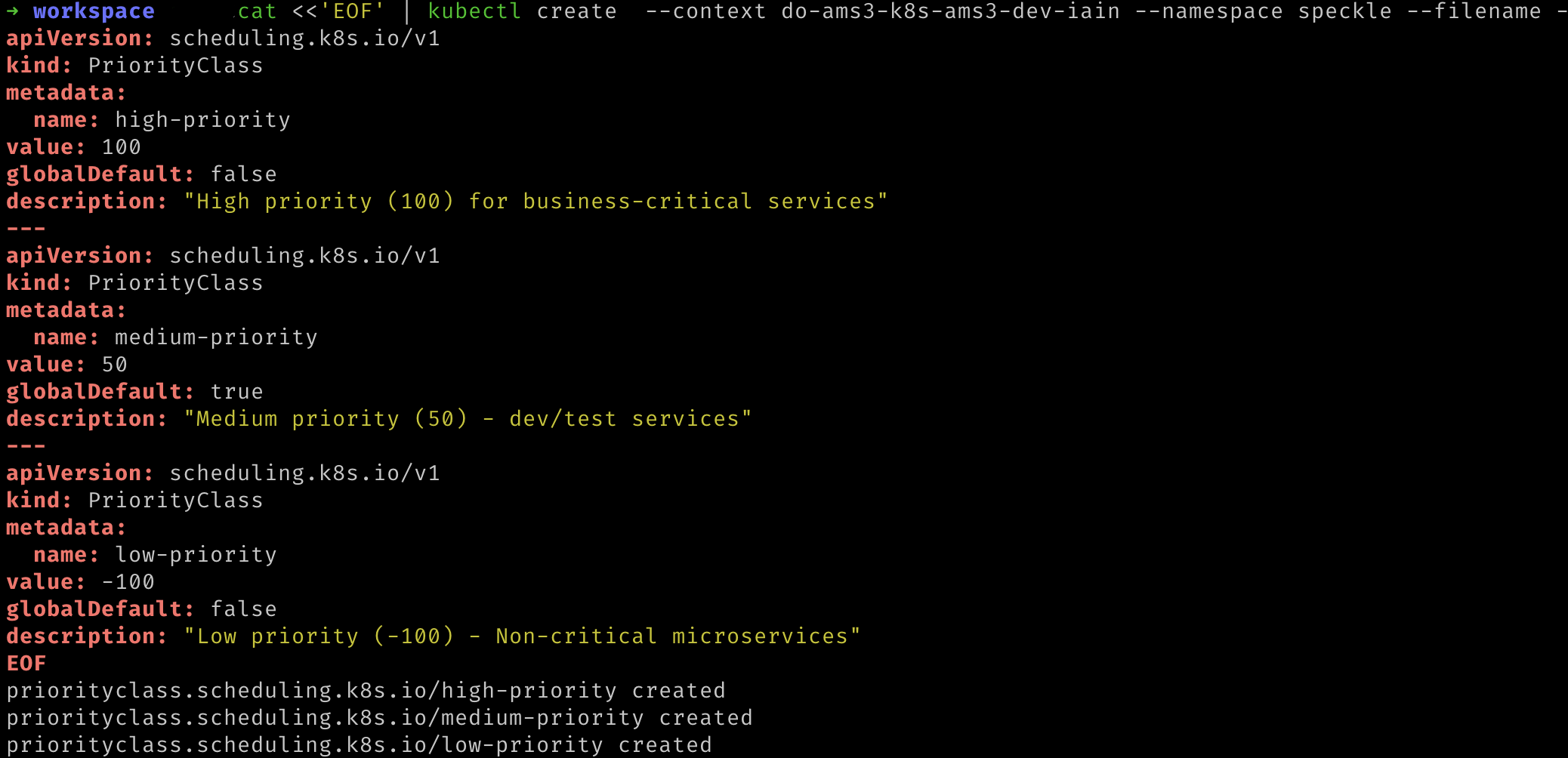

To view an individual value in the secret, replace redis_url with the key you require and ${YOUR_CLUSTER_CONTEXT_NAME} with the name of your cluster.

Priority Classes

If Kubernetes ever begins to run out of resources (such as processor or memory) on a node, then Kubernetes will have to terminate some of the processes. Kubernetes decides which processes to terminate based on their priority. Here we will specify the priority that Speckle will have. Run the following command. Replace ${YOUR_CLUSTER_CONTEXT_NAME} with the name of your cluster.
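As an illustration, priority classes could be declared as in the sketch below. The class names, values, and descriptions are assumptions made for this example, as is the file name `priority-classes.yaml`; use the names your values.yaml expects.

```shell
# Write three PriorityClass manifests to a local file.
# Names and values are illustrative assumptions, not confirmed by this guide.
cat > priority-classes.yaml <<'EOF'
apiVersion: scheduling.k8s.io/v1
kind: PriorityClass
metadata:
  name: high-priority
value: 100
globalDefault: false
description: "High priority (100) for business-critical services"
---
apiVersion: scheduling.k8s.io/v1
kind: PriorityClass
metadata:
  name: medium-priority
value: 50
globalDefault: false
description: "Medium priority (50) for regular services"
---
apiVersion: scheduling.k8s.io/v1
kind: PriorityClass
metadata:
  name: low-priority
value: -100
globalDefault: false
description: "Low priority (-100) for non-critical services"
EOF

# PriorityClass objects are cluster-scoped, so no namespace flag is needed.
kubectl apply --filename priority-classes.yaml --context "${YOUR_CLUSTER_CONTEXT_NAME}"
```

Pods with a higher value are evicted later than pods with a lower value when a node runs short of resources.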

Certificate Manager

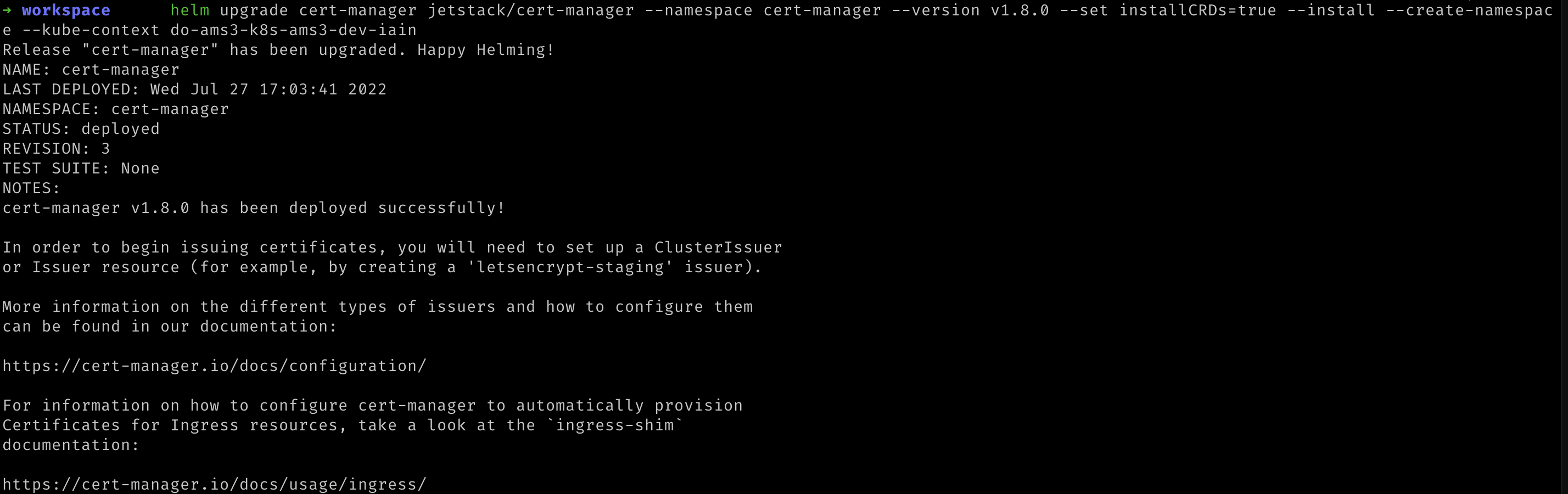

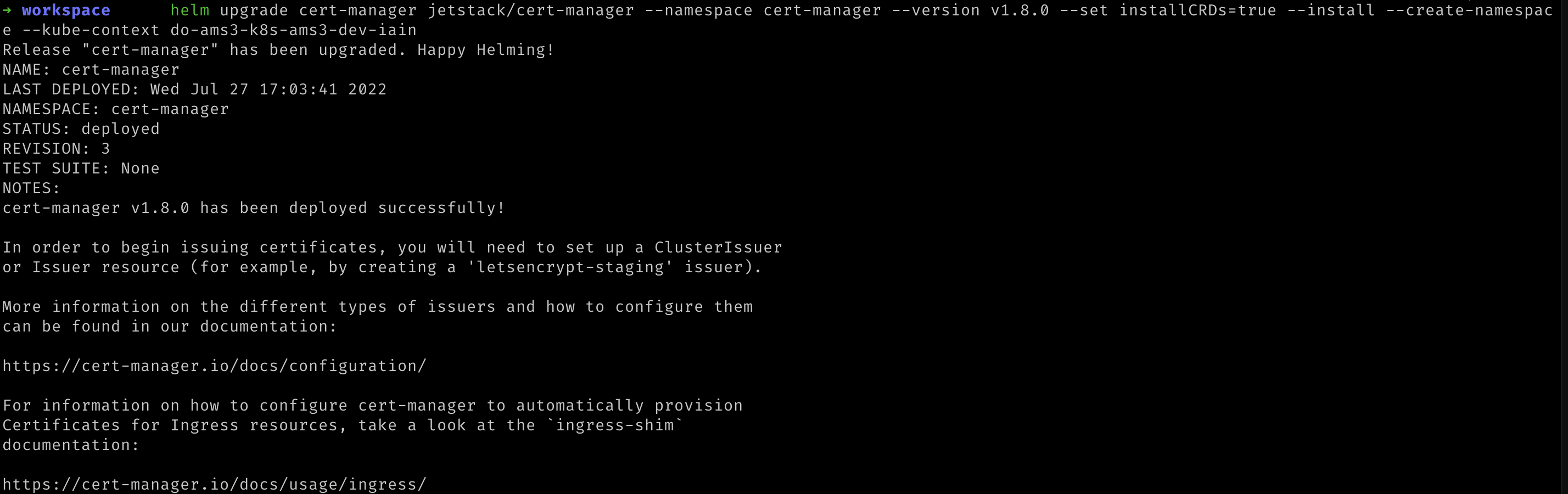

To enable secure (https) access to your Speckle server from the internet, we need to provide a means to create a TLS (X.509) certificate. This certificate must be renewed and kept up to date. To automate this, we will install CertManager and connect it to a Certificate Authority. CertManager will create a new certificate, request that the Certificate Authority sign it, and renew it when required. The Certificate Authority in our case will be Let’s Encrypt. If you are interested, you can read more about how Let’s Encrypt knows to trust your server with an HTTP-01 challenge; in our case, CertManager acts as the ACME client.

We first need to let Helm know where CertManager can be found:

Deploy the CertManager Helm release with the following command. Replace ${YOUR_CLUSTER_CONTEXT_NAME} with the name of your cluster.

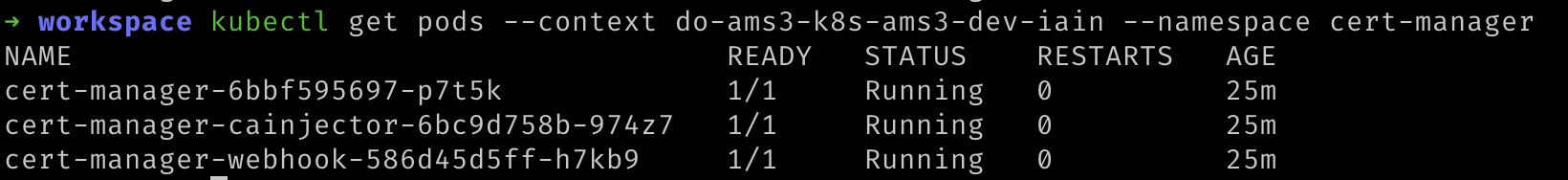

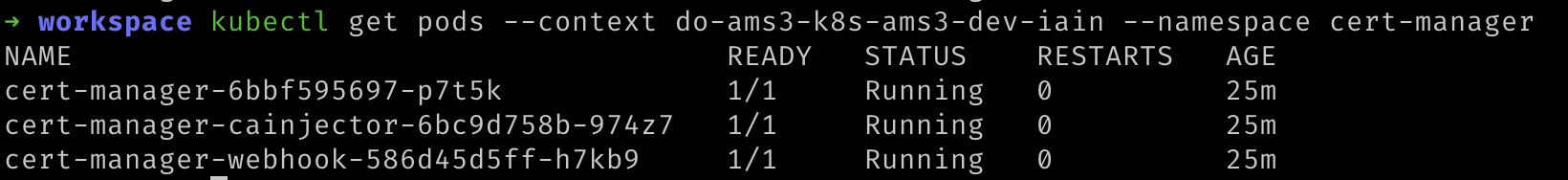

We can verify that this was deployed to Kubernetes with the following command. Replace ${YOUR_CLUSTER_CONTEXT_NAME} with the name of your cluster.
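The three CertManager steps above (register the chart repository, deploy the release, verify it) might be sketched as follows. The release name, the `cert-manager` namespace, and the `CERT_MANAGER_NAMESPACE` variable are assumptions for this example. The `installCRDs` value applies to older chart versions; recent versions of the chart name this setting `crds.enabled`.

```shell
# Hypothetical variable holding the namespace chosen for cert-manager.
CERT_MANAGER_NAMESPACE="cert-manager"

# Register the Jetstack chart repository, which hosts the cert-manager chart.
helm repo add jetstack https://charts.jetstack.io
helm repo update

# Install (or upgrade) cert-manager together with its custom resource definitions.
helm upgrade cert-manager jetstack/cert-manager \
  --install \
  --create-namespace \
  --namespace "${CERT_MANAGER_NAMESPACE}" \
  --set installCRDs=true \
  --kube-context "${YOUR_CLUSTER_CONTEXT_NAME}"

# List the cert-manager pods; they should reach the Running state.
kubectl get pods --namespace "${CERT_MANAGER_NAMESPACE}" --context "${YOUR_CLUSTER_CONTEXT_NAME}"
```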

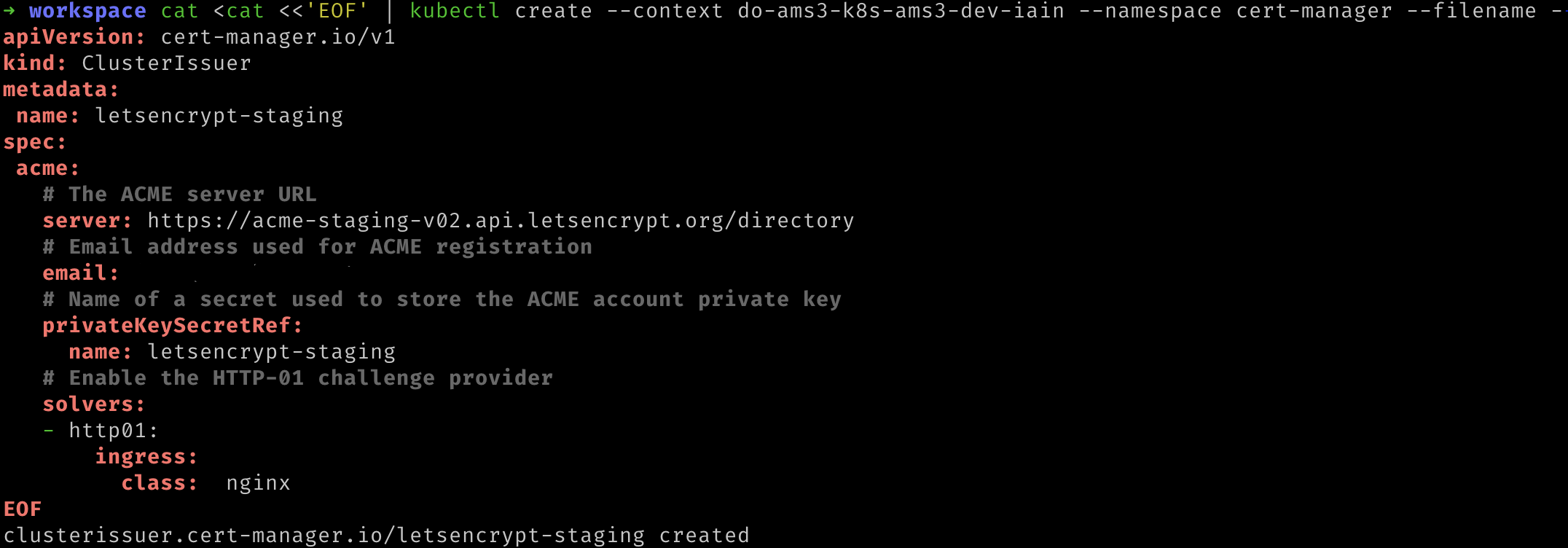

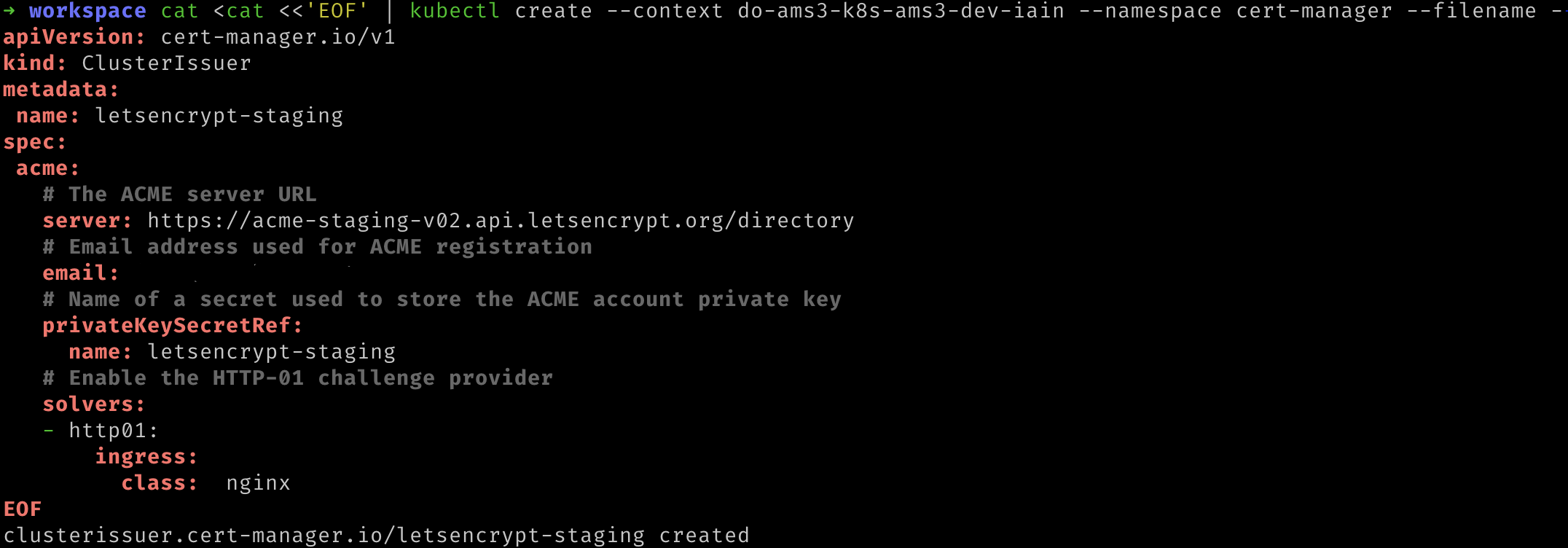

We now need to tell CertManager which Certificate Authority should be issuing the certificate. We will deploy a CertIssuer. Run the following command, replacing ${YOUR_EMAIL_ADDRESS} and ${YOUR_CLUSTER_CONTEXT_NAME} with the appropriate values.
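One way to declare the issuers is sketched below, assuming they are cert-manager ClusterIssuer resources named letsencrypt-staging and letsencrypt-prod (the two issuer names this guide uses later) and that the ingress class is `nginx`. The file name and the fallback email address are placeholders for illustration.

```shell
# Fallback placeholder so the sketch is self-contained; use your own address.
YOUR_EMAIL_ADDRESS="${YOUR_EMAIL_ADDRESS:-admin@example.org}"

# The heredoc delimiter is unquoted so the shell substitutes ${YOUR_EMAIL_ADDRESS}.
cat > cluster-issuers.yaml <<EOF
apiVersion: cert-manager.io/v1
kind: ClusterIssuer
metadata:
  name: letsencrypt-staging
spec:
  acme:
    server: https://acme-staging-v02.api.letsencrypt.org/directory
    email: ${YOUR_EMAIL_ADDRESS}
    privateKeySecretRef:
      name: letsencrypt-staging
    solvers:
      - http01:
          ingress:
            class: nginx
---
apiVersion: cert-manager.io/v1
kind: ClusterIssuer
metadata:
  name: letsencrypt-prod
spec:
  acme:
    server: https://acme-v02.api.letsencrypt.org/directory
    email: ${YOUR_EMAIL_ADDRESS}
    privateKeySecretRef:
      name: letsencrypt-prod
    solvers:
      - http01:
          ingress:
            class: nginx
EOF

# Create both issuers in the cluster.
kubectl apply --filename cluster-issuers.yaml --context "${YOUR_CLUSTER_CONTEXT_NAME}"
```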

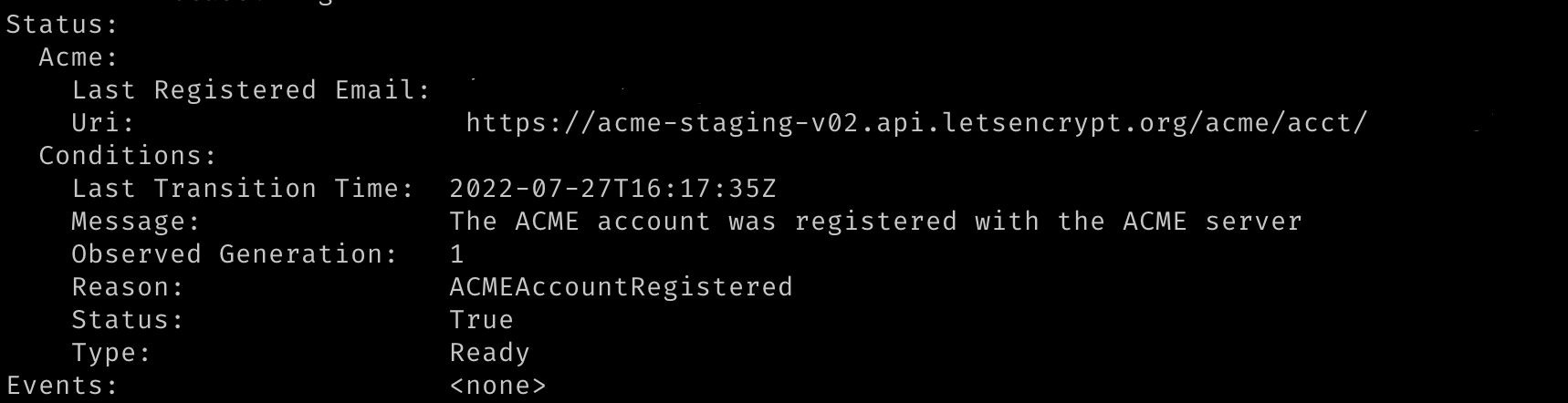

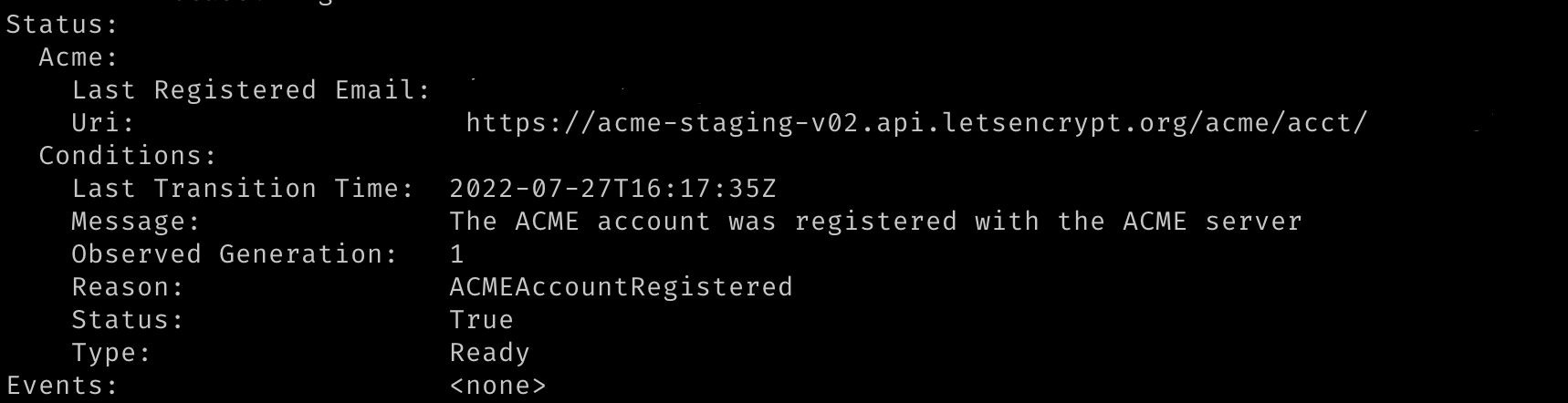

We can verify that this worked by running the following command. Replace ${YOUR_CLUSTER_CONTEXT_NAME} with the name of your cluster. The response should state that the message was “The ACME account was registered with the ACME server”.
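A sketch of this check, assuming the issuers are ClusterIssuer resources named letsencrypt-staging and letsencrypt-prod. The loop covers the staging issuer and the production issuer in one pass.

```shell
# Describe each issuer; look for the registration message in the Status section.
for issuer in letsencrypt-staging letsencrypt-prod; do
  kubectl describe clusterissuer "${issuer}" --context "${YOUR_CLUSTER_CONTEXT_NAME}"
done
```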

We repeat this command to verify that the production certificate was created as well. Replace ${YOUR_CLUSTER_CONTEXT_NAME} with the name of your cluster. Again, the response should state that the message was “The ACME account was registered with the ACME server”.

Ingress

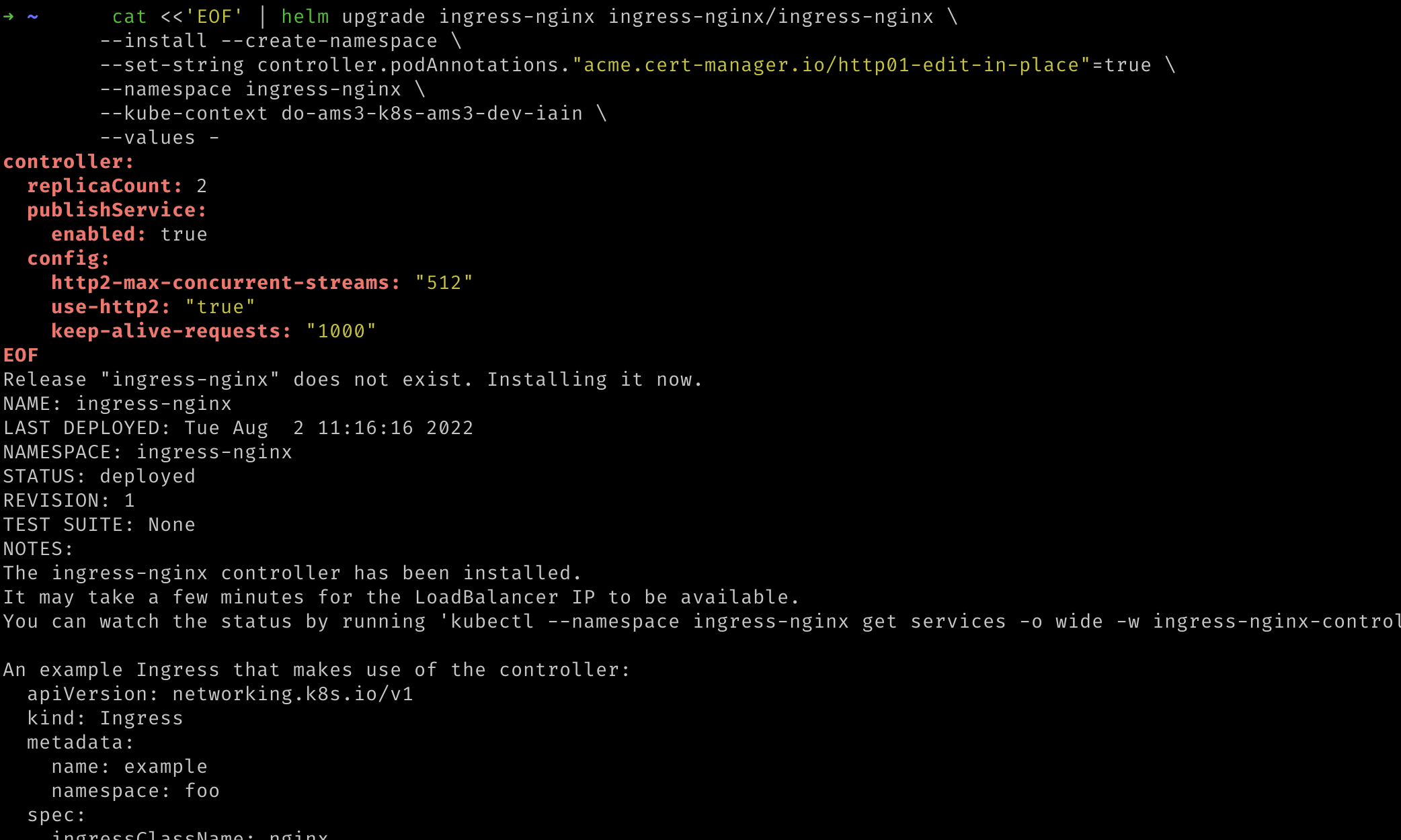

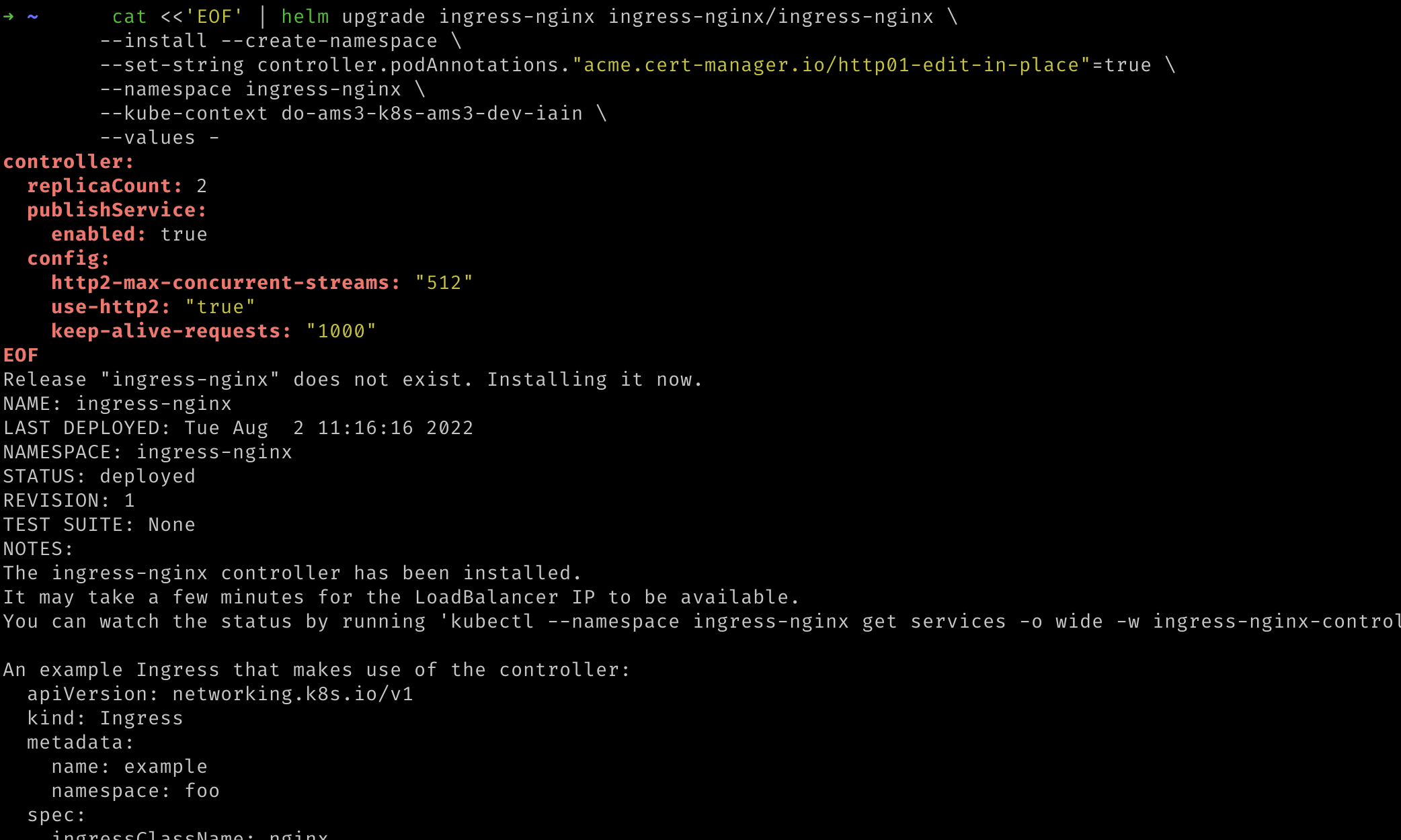

To allow access from the internet to your Kubernetes cluster, Speckle will deploy a Kubernetes Ingress, which defines how that external traffic should be managed. The component that manages the traffic per Speckle’s ingress definition is known as an Ingress Controller. In this step, we will deploy our Ingress Controller, NGINX.

We first let Helm know where NGINX ingress can be found:

Now we can deploy NGINX to our Kubernetes cluster. The additional annotation allows CertManager, deployed in the previous step, to advise NGINX which certificate to use for https connections. Replace ${YOUR_CLUSTER_CONTEXT_NAME} with the name of your cluster.
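The two NGINX steps might look like the sketch below. The release name, the `ingress-nginx` namespace, the `INGRESS_NAMESPACE` variable, and in particular the pod annotation passed with `--set-string` are assumptions; confirm the annotation your setup needs before relying on it.

```shell
# Hypothetical variable holding the namespace chosen for the ingress controller.
INGRESS_NAMESPACE="ingress-nginx"

# Register the ingress-nginx chart repository.
helm repo add ingress-nginx https://kubernetes.github.io/ingress-nginx
helm repo update

# Install (or upgrade) the controller. The annotation key below is an assumption;
# its dots are escaped so Helm treats the whole key as a single name.
helm upgrade ingress-nginx ingress-nginx/ingress-nginx \
  --install \
  --create-namespace \
  --namespace "${INGRESS_NAMESPACE}" \
  --set-string controller.podAnnotations."acme\.cert-manager\.io/http01-edit-in-place"=true \
  --kube-context "${YOUR_CLUSTER_CONTEXT_NAME}"
```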

We can ignore the instructions printed out by the NGINX Helm chart as the required resources will be provided by the Speckle Helm chart.

Configure your Deployment

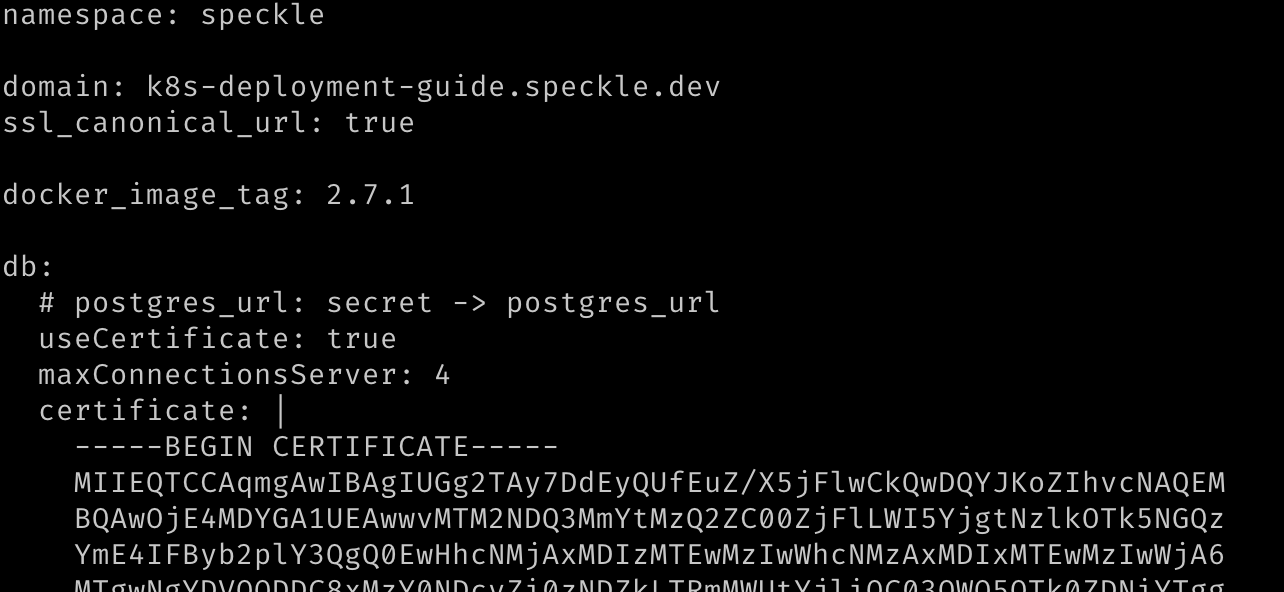

Download the values.yaml file from the Speckle server GitHub repository and save it as values.yaml to the current directory on your local machine. We will edit and use this file in the following steps.

Fill in the requested fields and save the file:

- namespace: required, we are using speckle in this guide, so change this value.

- domain: required, this is the domain name at which your Speckle server will be available.

- db.useCertificate: required, this should be set to true and will force Speckle to use the certificate for Postgres we shall provide in db.certificate.

- db.certificate: required, this can be found by clicking Download CA certificate in your database’s overview page on DigitalOcean. You can find your Postgres database by selecting it from the Database page on DigitalOcean. When entering the data, please use Helm’s pipe operator for multiline strings and be careful with indentation. We recommend reading Helm’s guide on formatting multiline strings, and refer to the image below for an example of this format.

- s3.endpoint: required, the endpoint can be found in the Settings Page of your DigitalOcean Space. You can find your Space by selecting it from the Spaces page on DigitalOcean. This value must be prepended with https://.

- s3.bucket: required, this is the name of your DigitalOcean space.

- s3.access_key: required, this is the Key of your Spaces API key. You can find this by viewing it from the Spaces API Key page on DigitalOcean.

- s3.auth.local.enabled: this is enabled by default. This requires users to register on your Speckle cluster with a username and password. If you wish to use a different authorization provider, such as Azure AD, GitHub, or Google, set this value to false and amend the relevant section below by enabling it and providing the necessary details.

- server.email: optional, enabling emails will enable extra features like sending invites.
  - You will need to set server.email.enabled to true.
  - Please set server.email.host, server.email.username, and optionally, depending on your email server, server.email.port.
  - This also requires the email_password secret to have been set in Step 3.

- cert_manager_issuer: optional, the default is set for Let’s Encrypt staging api letsencrypt-staging. For production, or if you encounter an issue with certificates, change the value to letsencrypt-prod.
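To illustrate the multiline format, the sketch below writes a small example fragment to a separate file so that it does not overwrite your real values.yaml. The nesting under `db` and `s3` is inferred from the dotted field names above, and the domain, endpoint region, bucket name, and key are hypothetical placeholders.

```shell
# Write an example fragment; values-example.yaml is a hypothetical file name.
cat > values-example.yaml <<'EOF'
namespace: speckle
domain: speckle.example.org
cert_manager_issuer: letsencrypt-staging

db:
  useCertificate: true
  # The pipe keeps the line breaks. Every certificate line is indented
  # one level deeper than the "certificate" key.
  certificate: |
    -----BEGIN CERTIFICATE-----
    (paste the body of the downloaded CA certificate here)
    -----END CERTIFICATE-----

s3:
  endpoint: https://ams3.digitaloceanspaces.com
  bucket: my-speckle-space
  access_key: YOUR_SPACES_KEY
EOF
```

If the certificate lines are not all indented by the same amount, the YAML parser will either fail or truncate the value.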

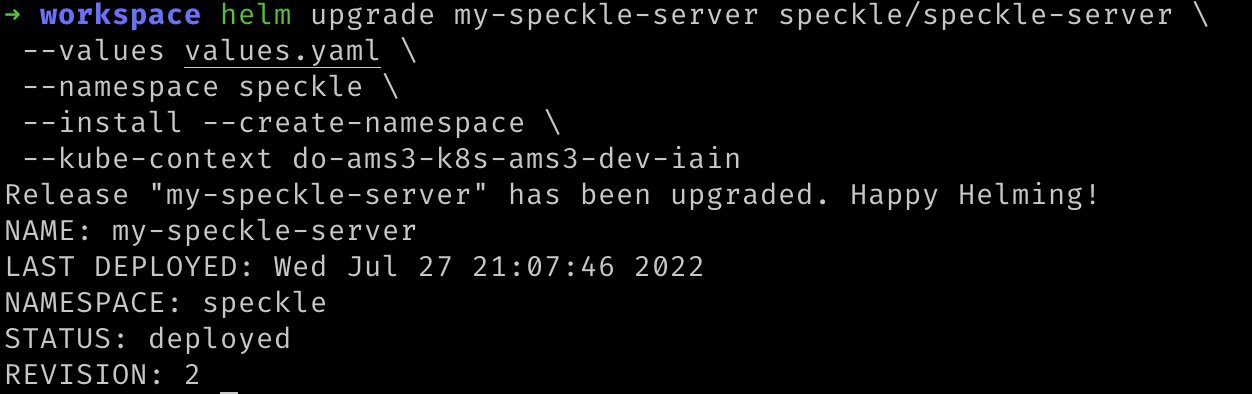

Deploy Speckle to Kubernetes

Run the following command to deploy the Helm chart to your Kubernetes cluster, using the values you configured in the prior step. Replace ${YOUR_CLUSTER_CONTEXT_NAME} with the name of your cluster.
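A sketch of the deployment follows. The chart repository URL, the chart name `speckle/speckle-server`, and the release name `speckle-server` are assumptions to confirm against the Speckle documentation; `VALUES_FILE` is a hypothetical variable for this example.

```shell
# Path to the values file edited in the previous step.
VALUES_FILE="values.yaml"

# Register the Speckle chart repository (URL assumed, please verify).
helm repo add speckle https://specklesystems.github.io/helm
helm repo update

# Install (or upgrade) the Speckle server release into the speckle namespace.
helm upgrade speckle-server speckle/speckle-server \
  --install \
  --create-namespace \
  --namespace speckle \
  --values "${VALUES_FILE}" \
  --kube-context "${YOUR_CLUSTER_CONTEXT_NAME}"
```

Because `helm upgrade --install` installs the release when it is missing and upgrades it otherwise, the same command can be run again safely.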

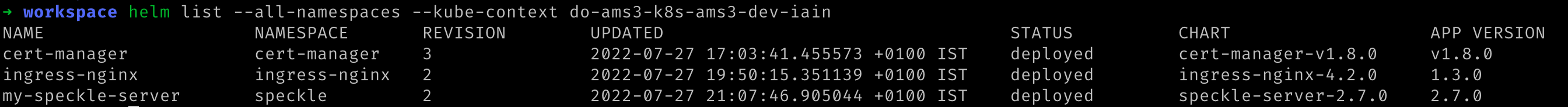

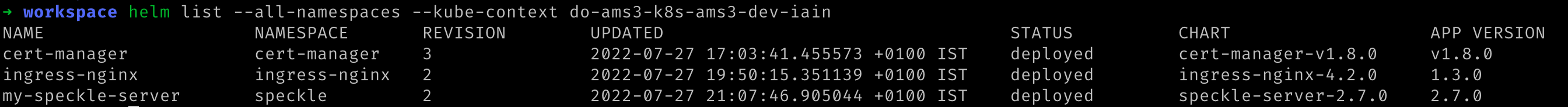

Verify that all deployed Helm charts were successful by checking their deployment status. Replace ${YOUR_CLUSTER_CONTEXT_NAME} with the name of your cluster. The output should list each release together with its status.
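The status check could be sketched as below, assuming the three releases were installed into namespaces named cert-manager, ingress-nginx, and speckle. Only speckle is confirmed by this guide; adjust the list to match your installation.

```shell
# Print the releases in each namespace; the STATUS column should read "deployed".
for namespace in cert-manager ingress-nginx speckle; do
  helm list --namespace "${namespace}" --kube-context "${YOUR_CLUSTER_CONTEXT_NAME}"
done
```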

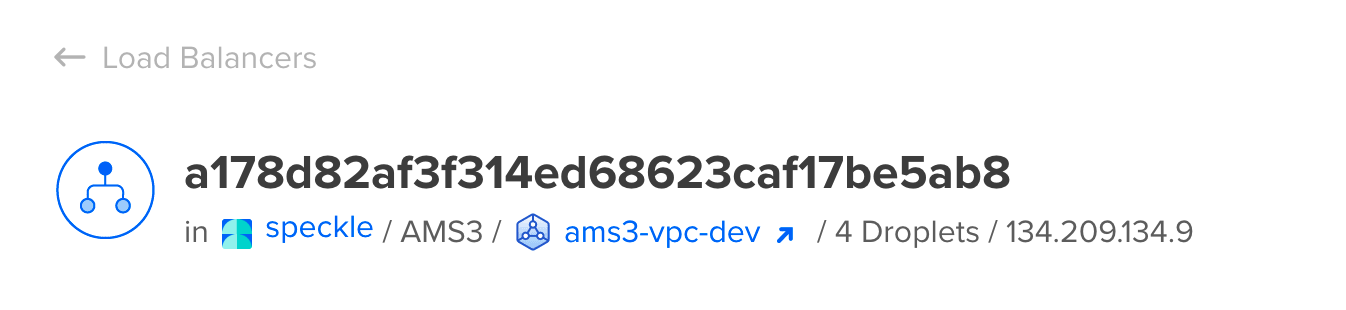

Update your Domain

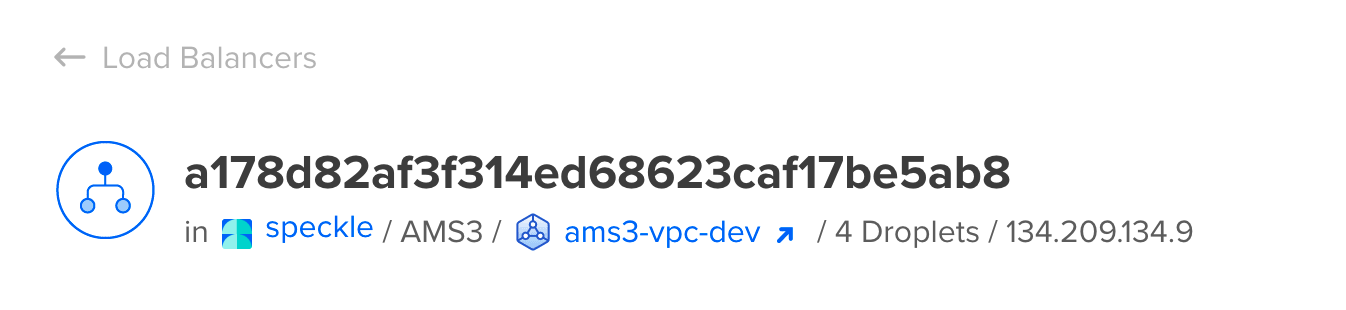

Initially, accessing Speckle may take some time as DigitalOcean must create a load balancer and Let’s Encrypt must sign the Certificate. The DigitalOcean load balancer was automatically requested from the Infrastructure provider (DigitalOcean) by the Ingress controller we deployed earlier. You can see the progress of the load balancer’s deployment on the Networking page of your DigitalOcean dashboard.

Once the load balancer has finished creating, DigitalOcean will display an externally-accessible IP address for it. Please make a note of the IP address.

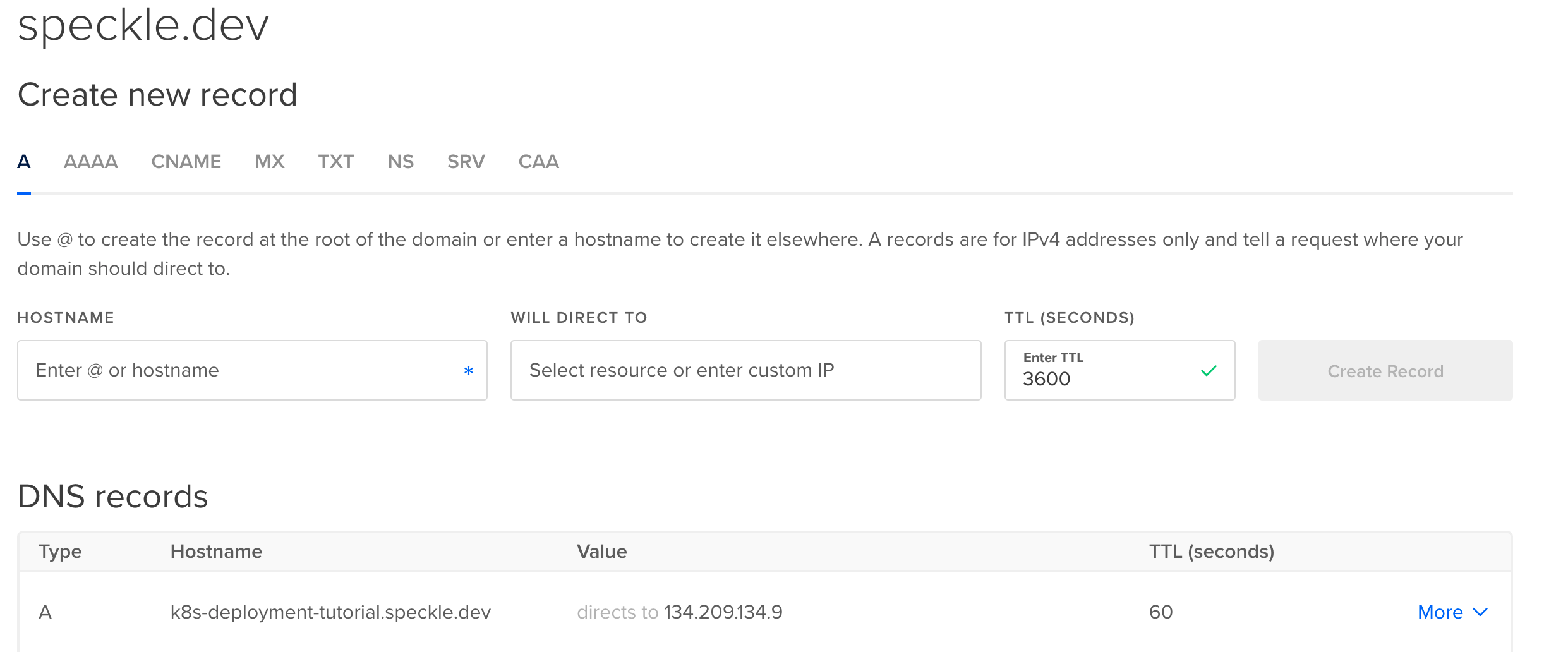

Navigate to your domain registrar’s website for your domain name and add a DNS A record. This will allow web browsers to resolve your domain name to the load balancer’s IP address. The domain must match the domain name provided to Speckle in the values.yaml file you edited previously. If DigitalOcean manages your Domain Names, adding a DNS A record using DigitalOcean’s Domain page will look something like the following:

It may take a moment for the domain name and A Record to be propagated to all relevant DNS servers, and then for Let’s Encrypt to reach your domain and generate a certificate. Please be patient while this is updated.

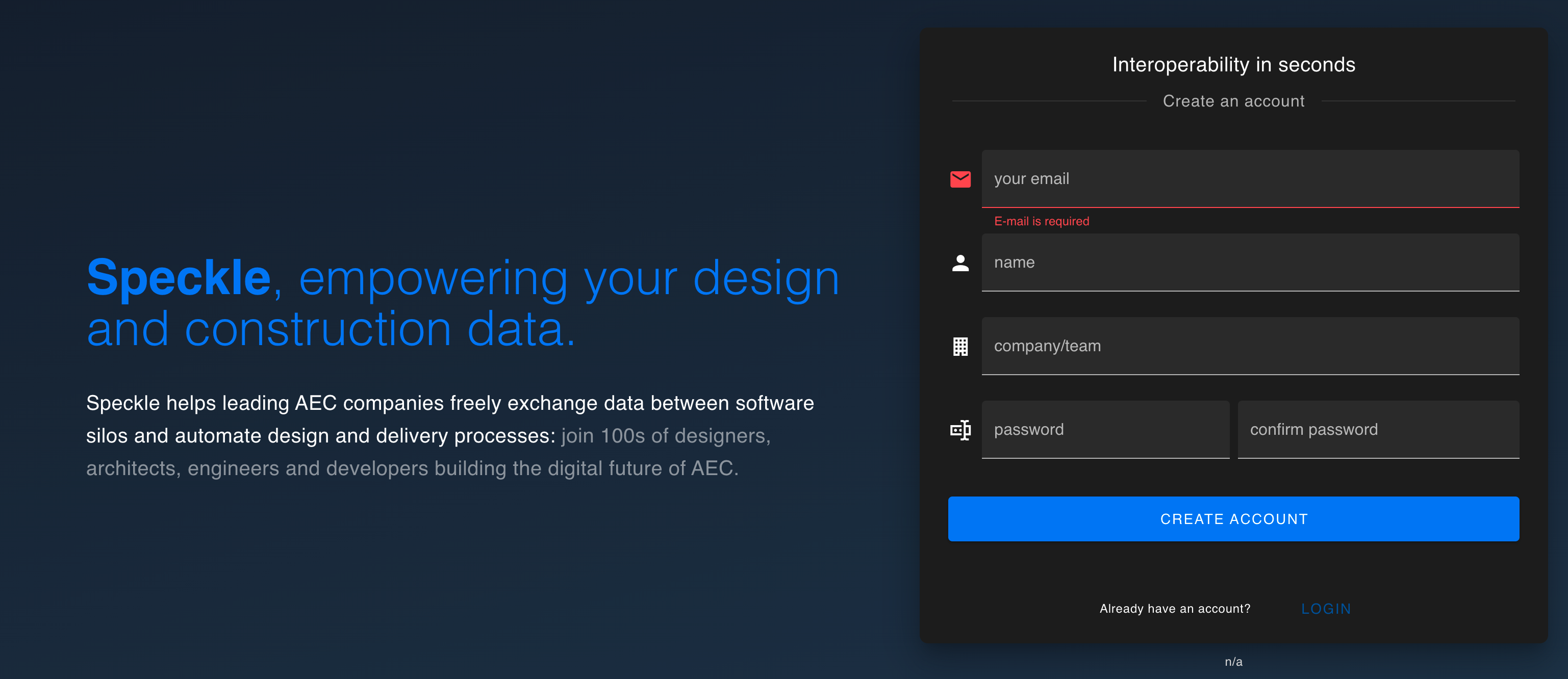

Create an account on your Server

You should now be able to visit your domain name and see the Speckle welcome page. Finally, register the first user. The first user who registers will be the administrator account for that server.

That’s it

You have deployed a Speckle Server on a fully controlled Kubernetes cluster. To reconfigure the server, you can change the values in values.yaml and run the following command. Replace ${YOUR_CLUSTER_CONTEXT_NAME} with the name of your cluster.
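A sketch of reapplying the configuration, assuming the release is named `speckle-server`, was installed from a chart referenced as `speckle/speckle-server`, and lives in the speckle namespace. `VALUES_FILE` is a hypothetical variable for this example.

```shell
# Path to the edited values file.
VALUES_FILE="values.yaml"

# Apply the changed values to the existing release.
helm upgrade speckle-server speckle/speckle-server \
  --namespace speckle \
  --values "${VALUES_FILE}" \
  --kube-context "${YOUR_CLUSTER_CONTEXT_NAME}"

# Each successful upgrade adds a new revision to the release history.
helm history speckle-server --namespace speckle --kube-context "${YOUR_CLUSTER_CONTEXT_NAME}"
```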

Common Issues

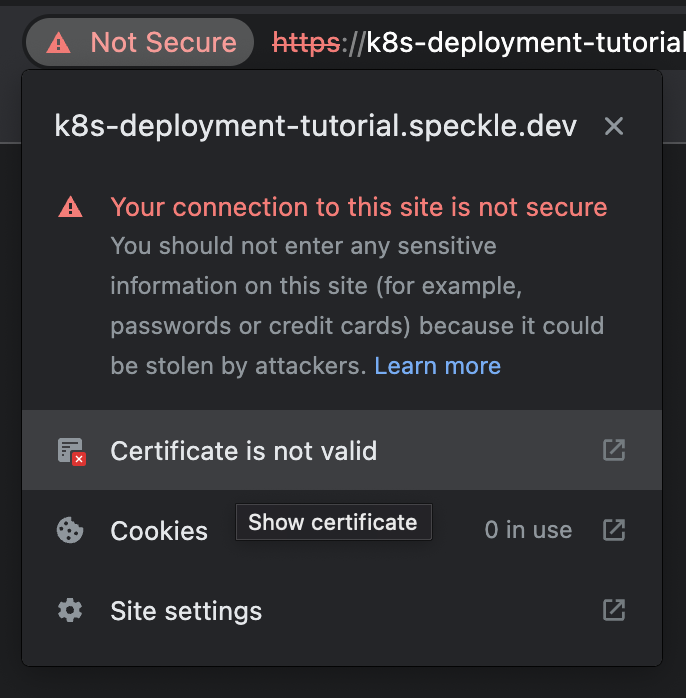

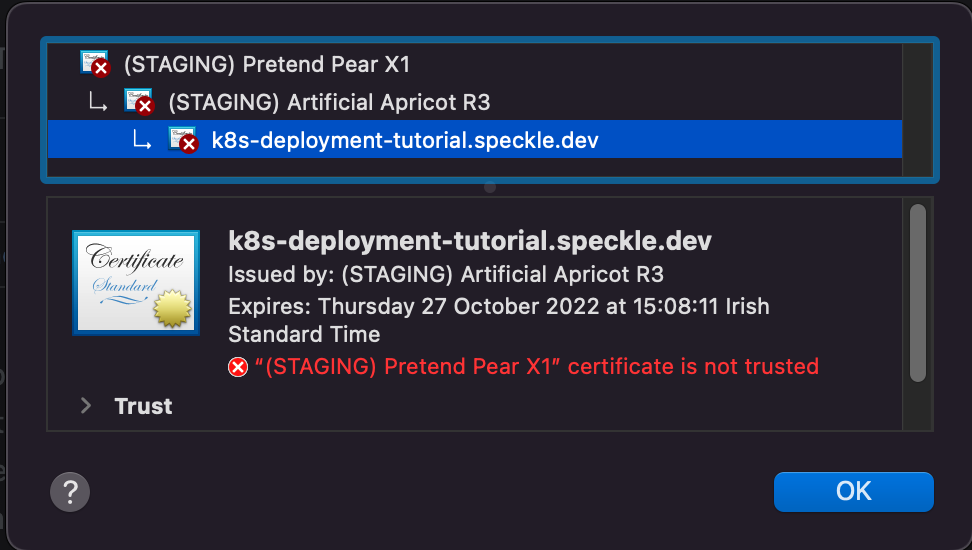

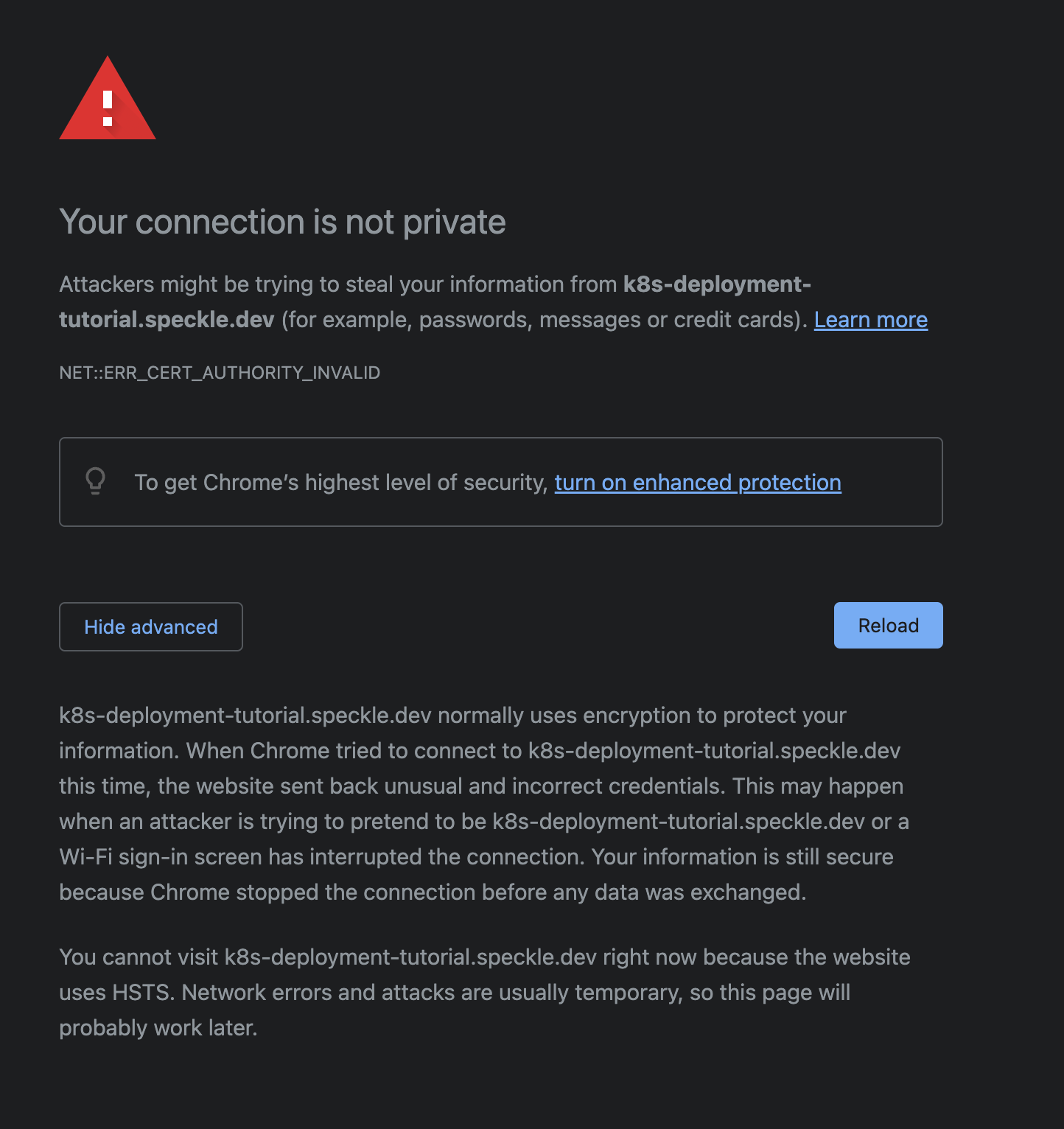

Untrusted Certificate

Your browser may not trust the certificate generated by Let’s Encrypt’s staging API. In Google’s Chrome browser, the warning will appear as follows:

You can confirm that the certificate came from the staging API by checking that its issuer is (STAGING) Pretend Pear X1 and/or (STAGING) Bogus Broccoli X2. Click the Not Secure warning next to the address bar, then click Certificate is not valid for more details.